Practice Free SAP-C02 Exam Online Questions

A company has an online learning platform that teaches data science. The platform uses the AWS Cloud to provision on-demand lab environments for its students. Each student receives a dedicated AWS account for a short time. Students need access to ml.p2.xlarge instances to run a single Amazon SageMaker machine learning training job and to deploy the inference endpoint. Account provisioning is automated. The accounts are members of an organization in AWS Organizations with all features enabled. The accounts must be provisioned in the ap-southeast-2 Region. The default resource usage quotas are not sufficient for the accounts. A solutions architect must enhance the account provisioning process to include automated quota increases.

Which solution will meet these requirements?

- A . Create a quota request template in the us-east-1 Region in the organization’s management account. Enable template association. Add a quota for SageMaker in ap-southeast-2 for ml.p2.xlarge training job usage. Set the desired quota to 1. Add a quota for SageMaker in ap-southeast-2 for ml.p2.xlarge endpoint usage. Set the desired quota to 1.

- B . Create a quota request template in the us-east-1 Region in the organization’s management account. Enable template association. Add a quota for SageMaker in ap-southeast-2 for ml.p2.xlarge training warm pool usage. Set the desired quota to 2.

- C . Create a quota request template in ap-southeast-2 in the organization’s management account. Enable template association. Add a quota for SageMaker in the us-east-1 Region for ml.p2.xlarge training job usage. Set the desired quota to 1. Add a quota for SageMaker in us-east-1 for ml.p2.xlarge endpoint usage. Set the desired quota to 1.

- D . Create a quota request template in ap-southeast-2 in the organization’s management account. Enable template association. Add a quota for SageMaker in the us-east-1 Region for ml.p2.xlarge training warm pool usage. Set the desired quota to 2.

A

Explanation:

Creating a quota request template in the us-east-1 Region of the management account ensures it applies to all newly provisioned accounts in the organization.

By specifying the required ml.p2.xlarge training job and endpoint usage quotas in ap-southeast-2, the accounts will automatically receive these higher quotas upon creation, supporting the student lab environments without additional manual quota requests.

This process integrates seamlessly with AWS Organizations for automated and standardized account provisioning.
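The template entries in option A can be sketched with the boto3 Service Quotas client. This is a minimal sketch under stated assumptions, not the platform's actual provisioning code: the two quota codes are placeholders (the real codes would have to be looked up, for example with `list_service_quotas`), and `apply_template` assumes credentials for the organization's management account.

```python
from typing import Any

TEMPLATE_REGION = "us-east-1"      # the answer creates the template in us-east-1
TARGET_REGION = "ap-southeast-2"   # Region where the student accounts need the quotas

# Placeholder quota codes (assumptions). Replace them with the real codes for
# ml.p2.xlarge training job usage and ml.p2.xlarge endpoint usage.
QUOTAS = {
    "L-TRAINING-PLACEHOLDER": 1.0,
    "L-ENDPOINT-PLACEHOLDER": 1.0,
}


def build_template_requests(quotas: dict[str, float], region: str) -> list[dict[str, Any]]:
    """Build one template entry per SageMaker quota."""
    return [
        {
            "ServiceCode": "sagemaker",
            "QuotaCode": code,
            "AwsRegion": region,
            "DesiredValue": value,
        }
        for code, value in quotas.items()
    ]


def apply_template(template_requests: list[dict[str, Any]]) -> None:
    """Write the entries to the template, then enable template association."""
    import boto3  # imported lazily so the builder above runs without AWS access

    client = boto3.client("service-quotas", region_name=TEMPLATE_REGION)
    for request in template_requests:
        client.put_service_quota_increase_request_into_template(**request)
    client.associate_service_quota_template()


template_requests = build_template_requests(QUOTAS, TARGET_REGION)
```

Calling `apply_template(template_requests)` once would register both entries; new accounts created in the organization afterward would then have the increases requested for them automatically.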

A company runs a customer service center that accepts calls and automatically sends all customers a managed, interactive, two-way experience survey by text message.

The applications that support the customer service center run on machines that the company hosts in an on-premises data center. The hardware that the company uses is old, and the company is experiencing downtime with the system. The company wants to migrate the system to AWS to improve reliability.

Which solution will meet these requirements with the LEAST ongoing operational overhead?

- A . Use Amazon Connect to replace the old call center hardware. Use Amazon Pinpoint to send text message surveys to customers.

- B . Use Amazon Connect to replace the old call center hardware. Use Amazon Simple Notification Service (Amazon SNS) to send text message surveys to customers.

- C . Migrate the call center software to Amazon EC2 instances that are in an Auto Scaling group. Use the EC2 instances to send text message surveys to customers.

- D . Use Amazon Pinpoint to replace the old call center hardware and to send text message surveys to customers.

A

Explanation:

Amazon Connect is a cloud-based contact center service that lets you set up a virtual call center. It provides an easy-to-use interface for managing customer interactions through voice and chat, and it integrates with other AWS services, such as Amazon S3 and Amazon Kinesis, to help you collect, store, and analyze customer data.

Amazon Pinpoint is a marketing automation and analytics service for engaging customers across channels such as email, SMS, push notifications, and voice. It supports two-way SMS, which the interactive survey requires, whereas Amazon SNS only sends outbound text messages. Together, the two managed services replace the on-premises hardware with no servers to maintain, so option A has the least ongoing operational overhead.

A delivery company needs to migrate its third-party route planning application to AWS. The third party supplies a supported Docker image from a public registry. The image can run in as many containers as required to generate the route map.

The company has divided the delivery area into sections with supply hubs so that delivery drivers travel the shortest distance possible from the hubs to the customers. To reduce the time necessary to generate route maps, each section uses its own set of Docker containers with a custom configuration that processes orders only in the section’s area.

The company needs the ability to allocate resources cost-effectively based on the number of running containers.

Which solution will meet these requirements with the LEAST operational overhead?

- A . Create an Amazon Elastic Kubernetes Service (Amazon EKS) cluster on Amazon EC2. Use the Amazon EKS CLI to launch the planning application in pods by using the --tags option to assign a custom tag to the pod.

- B . Create an Amazon Elastic Kubernetes Service (Amazon EKS) cluster on AWS Fargate. Use the Amazon EKS CLI to launch the planning application. Use the AWS CLI tag-resource API call to assign a custom tag to the pod.

- C . Create an Amazon Elastic Container Service (Amazon ECS) cluster on Amazon EC2. Use the AWS CLI with run-tasks set to true to launch the planning application by using the --tags option to assign a custom tag to the task.

- D . Create an Amazon Elastic Container Service (Amazon ECS) cluster on AWS Fargate. Use the AWS CLI run-task command and set enableECSManagedTags to true to launch the planning application. Use the --tags option to assign a custom tag to the task.

D

Explanation:

Amazon Elastic Container Service (ECS) on AWS Fargate is a fully managed service that allows you to run containers without having to manage the underlying infrastructure. When you launch tasks on Fargate, resources are automatically allocated based on the number of tasks running, which reduces the operational overhead.

Using ECS on Fargate allows you to assign custom tags to tasks using the --tags option in the run-task command, as described in the documentation: https://docs.aws.amazon.com/cli/latest/reference/ecs/run-task.html

You can also set enableECSManagedTags to true, which allows the service to automatically add the cluster name and service name as tags: https://docs.aws.amazon.com/AmazonECS/latest/developerguide/task-placement-constraints.html#tag-based-scheduling
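The run-task call in option D could look roughly like the following when made through boto3 instead of the AWS CLI. This is a sketch, not the company's deployment script: the cluster name, task definition, subnet ID, and the `section` tag key are hypothetical, and a public IP is assumed so that the task can pull the image from the public registry.

```python
from typing import Any


def build_run_task_request(
    cluster: str,
    task_definition: str,
    section: str,
    subnets: list[str],
    count: int = 1,
) -> dict[str, Any]:
    """Build keyword arguments for the ECS RunTask API on Fargate."""
    return {
        "cluster": cluster,
        "taskDefinition": task_definition,
        "launchType": "FARGATE",
        "count": count,
        # ECS adds its own managed tags (such as the cluster name) to the task.
        "enableECSManagedTags": True,
        # Custom tag used to attribute cost to one delivery section.
        "tags": [{"key": "section", "value": section}],
        "networkConfiguration": {
            "awsvpcConfiguration": {
                "subnets": subnets,
                "assignPublicIp": "ENABLED",  # assumption: no NAT gateway
            }
        },
    }


# Hypothetical names for illustration only.
request = build_run_task_request(
    cluster="route-planning",
    task_definition="route-planner:1",
    section="north-hub",
    subnets=["subnet-0123456789abcdef0"],
    count=3,
)
```

Passing the result as `boto3.client("ecs").run_task(**request)` would start the tasks. Once the `section` tag is activated as a cost allocation tag, spend can be grouped by section.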

A company is in the process of implementing AWS Organizations to constrain its developers to use only Amazon EC2, Amazon S3, and Amazon DynamoDB. The developers account resides in a dedicated organizational unit (OU).

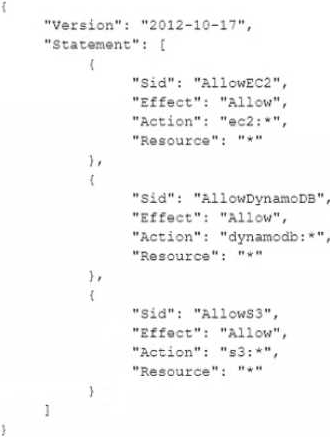

The solutions architect has implemented the following SCP on the developers account:

When this policy is deployed, IAM users in the developers account are still able to use AWS services that are not listed in the policy.

What should the solutions architect do to eliminate the developers’ ability to use services outside the scope of this policy?

- A . Create an explicit deny statement for each AWS service that should be constrained

- B . Remove the Full AWS Access SCP from the developer account’s OU

- C . Modify the Full AWS Access SCP to explicitly deny all services

- D . Add an explicit deny statement using a wildcard to the end of the SCP

B

Explanation:

https://docs.aws.amazon.com/organizations/latest/userguide/orgs_manage_policies_inheritance_auth.html
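A toy model of SCP allow-list evaluation shows why removing the Full AWS Access policy is the fix. It is deliberately simplified and is not the real policy engine: it ignores explicit denies, conditions, and IAM policies, and it assumes that FullAWSAccess is attached at the same level as the custom allow list, which would produce the behavior the question describes.

```python
from fnmatch import fnmatchcase

FULL_AWS_ACCESS = ["*"]                                  # AWS managed SCP: allow everything
DEVELOPER_ALLOW_LIST = ["ec2:*", "s3:*", "dynamodb:*"]   # custom SCP from the question


def level_allows(action: str, scps: list[list[str]]) -> bool:
    """A level allows an action if any SCP attached at that level allows it."""
    return any(fnmatchcase(action, pattern) for scp in scps for pattern in scp)


def scp_allows(action: str, levels: list[list[list[str]]]) -> bool:
    """The action must be allowed at every level from the root down."""
    return all(level_allows(action, scps) for scps in levels)


# Each inner list holds the SCPs attached at one level: [root, developers OU].
before = [[FULL_AWS_ACCESS], [FULL_AWS_ACCESS, DEVELOPER_ALLOW_LIST]]
after = [[FULL_AWS_ACCESS], [DEVELOPER_ALLOW_LIST]]

print(scp_allows("sqs:SendMessage", before))   # FullAWSAccess still allows it
print(scp_allows("sqs:SendMessage", after))    # blocked once FullAWSAccess is removed
print(scp_allows("ec2:RunInstances", after))   # listed services keep working
```

While FullAWSAccess remains attached, the union of allows at that level covers every service, so the narrower allow list has no effect.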

A company has an application that runs on a fleet of Amazon EC2 instances behind an Application Load Balancer (ALB). The application is in an AWS account that has AWS CloudTrail enabled. The company restricts access to the application by adding the IP addresses of end users to a security group that is associated with the ALB.

The company is developing an AWS Lambda function to determine if the allowed IP addresses have accessed the application recently. If an allowed IP address has not accessed the application in the last 90 days, the Lambda function will remove the IP address from the security group.

The company needs to implement the functionality for the Lambda function to check the IP addresses.

Which combination of steps will provide this functionality MOST cost-effectively? (Select TWO.)

- A . For the VPC that contains the ALB, configure VPC flow logs to be sent to a log group in Amazon CloudWatch Logs.

- B . Enable access logging on the ALB. Create an Amazon Athena table to query the ALB access logs.

- C . Program the Lambda function to check when each allowed IP address from the security group last appeared in the VPC flow logs.

- D . Program the Lambda function to check when each allowed IP address from the security group last appeared in the ALB access logs.

- E . Program the Lambda function to check when each allowed IP address from the security group last appeared in the CloudTrail logs.

B,D

Explanation:

B is correct because ALB access logs contain the exact IP addresses of all incoming client requests, and storing these logs in S3 enables historical analysis. Using Amazon Athena to query them offers an efficient, serverless way to check when each IP last accessed the application.

D is correct because parsing ALB access logs gives direct evidence of activity from each IP address, which is exactly what the "last accessed in 90 days" rule needs.

A and C refer to VPC flow logs, which only capture network metadata, not HTTP request-level details like the ALB logs do.

E is incorrect because CloudTrail logs AWS API actions, not web requests through an ALB.

Reference: Access logs for your Application Load Balancer

Querying ALB logs with Amazon Athena
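The check that the Lambda function performs can be sketched as a pure function. This sketch assumes that an Athena query over the ALB access logs has already produced a mapping from client IP address to the time of its most recent request. The function name and the sample addresses are hypothetical, and the security group entries are assumed to be single addresses rather than wider CIDR ranges.

```python
from datetime import datetime, timedelta, timezone


def stale_ips(
    allowed_ips: list[str],
    last_seen: dict[str, datetime],
    now: datetime,
    max_age_days: int = 90,
) -> list[str]:
    """Return allowed IPs with no logged request inside the window."""
    cutoff = now - timedelta(days=max_age_days)
    return sorted(
        ip
        for ip in allowed_ips
        if last_seen.get(ip) is None or last_seen[ip] < cutoff
    )


# Sample data using documentation address ranges.
now = datetime(2024, 6, 1, tzinfo=timezone.utc)
last_seen = {
    "203.0.113.10": datetime(2024, 5, 20, tzinfo=timezone.utc),  # recent
    "198.51.100.7": datetime(2024, 1, 1, tzinfo=timezone.utc),   # too old
}
allowed = ["203.0.113.10", "198.51.100.7", "192.0.2.44"]  # last one never seen

to_remove = stale_ips(allowed, last_seen, now)
```

The function would then revoke the security group rules for the addresses in `to_remove`.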

A finance company hosts a data lake in Amazon S3. The company receives financial data records over SFTP each night from several third parties. The company runs its own SFTP server on an Amazon EC2 instance in a public subnet of a VPC. After the files are uploaded, they are moved to the data lake by a cron job that runs on the same instance. The SFTP server is reachable on DNS sftp.example.com through the use of Amazon Route 53.

What should a solutions architect do to improve the reliability and scalability of the SFTP solution?

- A . Move the EC2 instance into an Auto Scaling group. Place the EC2 instance behind an Application Load Balancer (ALB). Update the DNS record sftp.example.com in Route 53 to point to the ALB.

- B . Migrate the SFTP server to AWS Transfer for SFTP. Update the DNS record sftp.example.com in Route 53 to point to the server endpoint hostname.

- C . Migrate the SFTP server to a file gateway in AWS Storage Gateway. Update the DNS record sftp.example.com in Route 53 to point to the file gateway endpoint.

- D . Place the EC2 instance behind a Network Load Balancer (NLB). Update the DNS record sftp.example.com in Route 53 to point to the NLB.

B

Explanation:

https://aws.amazon.com/aws-transfer-family/faqs/

https://docs.aws.amazon.com/transfer/latest/userguide/what-is-aws-transfer-family.html

https://aws.amazon.com/about-aws/whats-new/2018/11/aws-transfer-for-sftp-fully-managed-sftp-for-s3/?nc1=h_ls

A company runs an IoT application in the AWS Cloud. The company has millions of sensors that collect data from houses in the United States. The sensors use the MQTT protocol to connect and send data to a custom MQTT broker. The MQTT broker stores the data on a single Amazon EC2 instance. The sensors connect to the broker through the domain named iot.example.com. The company uses Amazon Route 53 as its DNS service. The company stores the data in Amazon DynamoDB.

On several occasions, the amount of data has overloaded the MQTT broker and has resulted in lost sensor data. The company must improve the reliability of the solution.

Which solution will meet these requirements?

- A . Create an Application Load Balancer (ALB) and an Auto Scaling group for the MQTT broker. Use the Auto Scaling group as the target for the ALB. Update the DNS record in Route 53 to an alias record. Point the alias record to the ALB. Use the MQTT broker to store the data.

- B . Set up AWS IoT Core to receive the sensor data. Create and configure a custom domain to connect to AWS IoT Core. Update the DNS record in Route 53 to point to the AWS IoT Core Data-ATS endpoint. Configure an AWS IoT rule to store the data.

- C . Create a Network Load Balancer (NLB). Set the MQTT broker as the target. Create an AWS Global Accelerator accelerator. Set the NLB as the endpoint for the accelerator. Update the DNS record in Route 53 to a multivalue answer record. Set the Global Accelerator IP addresses as values. Use the MQTT broker to store the data.

- D . Set up AWS IoT Greengrass to receive the sensor data. Update the DNS record in Route 53 to point to the AWS IoT Greengrass endpoint. Configure an AWS IoT rule to invoke an AWS Lambda function to store the data.

B

Explanation:

AWS IoT Core provides a managed MQTT message broker that scales to millions of connected devices, so it removes the single overloaded EC2 instance that causes the data loss. A custom domain lets the sensors keep connecting to iot.example.com, the Route 53 record points to the AWS IoT Core Data-ATS endpoint, and an AWS IoT rule writes incoming messages directly to Amazon DynamoDB.

Option A does not work because an Application Load Balancer routes only HTTP, HTTPS, and gRPC traffic, so it cannot front a broker that accepts plain MQTT over TCP. Option C leaves the single broker in place behind the NLB. Option D misuses AWS IoT Greengrass, which extends AWS IoT to edge devices and is not a cloud endpoint for sensors.

Reference:

https://aws.amazon.com/iot-core/

https://aws.amazon.com/route53/

A company runs an application in an on-premises data center. The application gives users the ability to upload media files. The files persist in a file server. The web application has many users. The application server is overutilized, which causes data uploads to fail occasionally. The company frequently adds new storage to the file server. The company wants to resolve these challenges by migrating the application to AWS.

Users from across the United States and Canada access the application. Only authenticated users should have the ability to access the application to upload files. The company will consider a solution that refactors the application, and the company needs to accelerate application development.

Which solution will meet these requirements with the LEAST operational overhead?

- A . Use AWS Application Migration Service to migrate the application server to Amazon EC2 instances. Create an Auto Scaling group for the EC2 instances. Use an Application Load Balancer to distribute the requests. Modify the application to use Amazon S3 to persist the files. Use Amazon Cognito to authenticate users.

- B . Use AWS Application Migration Service to migrate the application server to Amazon EC2 instances. Create an Auto Scaling group for the EC2 instances. Use an Application Load Balancer to distribute the requests. Set up AWS IAM Identity Center (AWS Single Sign-On) to give users the ability to sign in to the application. Modify the application to use Amazon S3 to persist the files.

- C . Create a static website for uploads of media files. Store the static assets in Amazon S3. Use AWS AppSync to create an API. Use AWS Lambda resolvers to upload the media files to Amazon S3. Use Amazon Cognito to authenticate users.

- D . Use AWS Amplify to create a static website for uploads of media files. Use Amplify Hosting to serve the website through Amazon CloudFront. Use Amazon S3 to store the uploaded media files. Use Amazon Cognito to authenticate users.

D

Explanation:

Option D uses AWS Amplify to create a static website for uploads of media files, Amplify Hosting to serve the website through Amazon CloudFront, Amazon S3 to store the uploaded media files, and Amazon Cognito to authenticate users. This solution meets the requirements with the least operational overhead because AWS Amplify lets frontend web and mobile developers build, ship, and host full-stack applications on AWS without deep cloud expertise, with the flexibility to use other AWS services as use cases evolve. By using AWS Amplify, the company can refactor the application to a serverless architecture that reduces operational complexity and costs. AWS Amplify offers the following features and benefits:

- Amplify Studio: A visual interface that enables you to build and deploy a full-stack app quickly, including frontend UI and backend.

- Amplify CLI: A local toolchain that enables you to configure and manage an app backend with just a few commands.

- Amplify Libraries: Open-source client libraries that enable you to build cloud-powered mobile and web apps.

- Amplify UI Components: Open-source design system with cloud-connected components for building feature-rich apps fast.

- Amplify Hosting: Fully managed CI/CD and hosting for fast, secure, and reliable static and server-side rendered apps.

By using AWS Amplify to create a static website for uploads of media files, the company can leverage Amplify Studio to visually build a pixel-perfect UI and connect it to a cloud backend in clicks. By using Amplify Hosting to serve the website through Amazon CloudFront, the company can easily deploy its web app or website to the fast, secure, and reliable AWS content delivery network (CDN), with hundreds of points of presence globally. By using Amazon S3 to store the uploaded media files, the company can benefit from a highly scalable, durable, and cost-effective object storage service that can handle any amount of data. By using Amazon Cognito to authenticate users, the company can add user sign-up, sign-in, and access control to its web app with a fully managed service that scales to support millions of users.

The other options are not correct because:

Using AWS Application Migration Service to migrate the application server to Amazon EC2 instances (options A and B) would not refactor the application or accelerate development. AWS Application Migration Service (AWS MGN) rehosts physical servers, virtual machines (VMs), or cloud servers from a source infrastructure to AWS with minimal changes. Because this is a lift-and-shift approach, the company would still have to manage EC2 instances, an Auto Scaling group, and a load balancer, so it would not reduce operational overhead or costs compared to a serverless architecture.

Creating a static website for uploads of media files and using AWS AppSync to create an API (option C) would not be as simple or fast as using AWS Amplify. AWS AppSync is a service that enables you to create flexible APIs for securely accessing, manipulating, and combining data from one or more data sources. However, the company would have to build and maintain the API and the Lambda resolvers itself, which requires more configuration and management than Amplify Hosting with direct uploads to Amazon S3.

Setting up AWS IAM Identity Center (AWS Single Sign-On) to give users the ability to sign in to the application would not be as suitable as using Amazon Cognito. AWS Single Sign-On (AWS SSO) is a service that enables you to centrally manage SSO access and user permissions across multiple AWS accounts and business applications. However, this service is designed for enterprise customers who need to manage access for employees or partners across multiple resources. It is not intended for authenticating end users of web or mobile apps.

Reference:

https://aws.amazon.com/amplify/

https://aws.amazon.com/s3/

https://aws.amazon.com/cognito/

https://aws.amazon.com/mgn/

https://aws.amazon.com/appsync/

https://aws.amazon.com/single-sign-on/

A company hosts an ecommerce site using EC2, ALB, and DynamoDB in one AWS Region. The site uses a custom domain in Route 53. The company wants to replicate the stack to a second Region for disaster recovery and faster access for global customers.

What should the architect do?

- A . Use CloudFormation to deploy to the second Region. Use Route 53 latency-based routing. Enable global tables in DynamoDB.

- B . Use the console to recreate the infra manually in the second Region. Use weighted routing.

- C . Replicate only the S3 and DynamoDB data. Use Route 53 failover routing.

- D . Use Beanstalk and DynamoDB Streams for replication. Use latency-based routing.

A

Explanation:

A is correct because:

CloudFormation templates enable repeatable infrastructure deployment.

Route 53 latency-based routing ensures users hit the closest Region.

DynamoDB global tables allow multi-Region, active-active replication of application data.

Manual console work (B) is not scalable.

C lacks EC2/ALB replication.

D adds unnecessary services like Beanstalk and doesn’t scale cleanly.

Reference: https://docs.aws.amazon.com/amazondynamodb/latest/developerguide/GlobalTables.html
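The latency-based routing part of option A can be sketched as a Route 53 change batch with one alias record per Region. Everything specific here is an assumption for illustration: the question does not name the Regions or the domain, and the ALB DNS names and hosted zone IDs are placeholders.

```python
from typing import Any


def latency_alias_record(
    name: str, region: str, alb_dns_name: str, alb_zone_id: str
) -> dict[str, Any]:
    """Build one latency-based alias record that points at a Regional ALB."""
    return {
        "Action": "UPSERT",
        "ResourceRecordSet": {
            "Name": name,
            "Type": "A",
            "SetIdentifier": region,  # must be unique among records with this name
            "Region": region,         # marks the record as latency-based
            "AliasTarget": {
                "HostedZoneId": alb_zone_id,
                "DNSName": alb_dns_name,
                "EvaluateTargetHealth": True,
            },
        },
    }


# Placeholder values (assumptions), one entry per Region.
albs = {
    "us-east-1": ("primary-alb.us-east-1.elb.amazonaws.com", "ZPLACEHOLDER1"),
    "eu-west-1": ("secondary-alb.eu-west-1.elb.amazonaws.com", "ZPLACEHOLDER2"),
}

change_batch = {
    "Changes": [
        latency_alias_record("shop.example.com", region, dns_name, zone_id)
        for region, (dns_name, zone_id) in albs.items()
    ]
}
```

The batch would be submitted with `boto3.client("route53").change_resource_record_sets(HostedZoneId=..., ChangeBatch=change_batch)`. Setting `EvaluateTargetHealth` to true lets Route 53 stop answering with a Region whose ALB has no healthy targets, which supports the disaster recovery goal.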

A company is migrating mobile banking applications to run on Amazon EC2 instances in a VPC. Backend service applications run in an on-premises data center. The data center has an AWS Direct Connect connection into AWS. The applications that run in the VPC need to resolve DNS requests to an on-premises Active Directory domain that runs in the data center.

Which solution will meet these requirements with the LEAST administrative overhead?

- A . Provision a set of EC2 instances across two Availability Zones in the VPC as caching DNS servers to resolve DNS queries from the application servers within the VPC.

- B . Provision an Amazon Route 53 private hosted zone. Configure NS records that point to on-premises DNS servers.

- C . Create DNS endpoints by using Amazon Route 53 Resolver. Add conditional forwarding rules to resolve DNS namespaces between the on-premises data center and the VPC.

- D . Provision a new Active Directory domain controller in the VPC with a bidirectional trust between this new domain and the on-premises Active Directory domain.