Practice Free DOP-C02 Exam Online Questions

A company runs an application on an Amazon Elastic Container Service (Amazon ECS) service by using the AWS Fargate launch type. The application consumes messages from an Amazon Simple Queue Service (Amazon SQS) queue. The application can take several minutes to process each message from the queue. When the application processes a message, the application reads a file from an Amazon S3 bucket and processes the data in the file. The application writes the processed output to a second S3 bucket. The company uses Amazon CloudWatch Logs to monitor processing errors and to ensure that the application processes messages successfully.

The SQS queue typically receives a low volume of messages. However, occasionally the queue receives higher volumes of messages. A DevOps engineer needs to implement a solution to reduce the processing time of message bursts.

Which solution will meet this requirement in the MOST cost-effective way?

- A . Register the ECS service as a scalable target in AWS Application Auto Scaling. Configure a target tracking scaling policy to scale the service in response to the queue size.

- B . Increase the maximum number of messages that Amazon SQS requests to batch messages together. Use long polling to minimize the number of API calls to Amazon SQS during periods of low traffic.

- C . Send messages to an Amazon EventBridge event bus instead of the SQS queue. Replace the ECS service with an EventBridge rule that launches ECS tasks in response to matching events.

- D . Create an Auto Scaling group of EC2 instances. Create a capacity provider in the ECS cluster by using the Auto Scaling group. Change the ECS service to use the EC2 launch type.

A

Explanation:


AWS recommends Application Auto Scaling for ECS services to dynamically adjust the number of running tasks based on Amazon SQS queue metrics such as ApproximateNumberOfMessagesVisible. With a target tracking scaling policy, the ECS service on Fargate adds tasks automatically when the queue backlog grows and removes them when traffic decreases. This is a fully managed, cost-efficient solution (see the ECS Service Auto Scaling section of the Developer Guide).
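The scaling setup described above could be sketched in CloudFormation roughly as follows. This is a minimal sketch under stated assumptions, not a reference configuration: the cluster name `demo-cluster`, the service name `worker-service`, the queue name `work-queue`, the capacity limits, and the target value of 10 visible messages are all hypothetical values chosen for illustration.

```yaml
Resources:
  # Registers the ECS service's desired task count as a scalable target.
  WorkerScalableTarget:
    Type: AWS::ApplicationAutoScaling::ScalableTarget
    Properties:
      ServiceNamespace: ecs
      ScalableDimension: ecs:service:DesiredCount
      ResourceId: service/demo-cluster/worker-service  # hypothetical names
      MinCapacity: 1    # assumed floor for low-volume periods
      MaxCapacity: 10   # assumed ceiling for bursts

  # Target tracking policy that follows the SQS queue depth.
  WorkerQueueTrackingPolicy:
    Type: AWS::ApplicationAutoScaling::ScalingPolicy
    Properties:
      PolicyName: track-queue-depth
      PolicyType: TargetTrackingScaling
      ScalingTargetId: !Ref WorkerScalableTarget
      TargetTrackingScalingPolicyConfiguration:
        TargetValue: 10  # assumed acceptable number of visible messages
        CustomizedMetricSpecification:
          Namespace: AWS/SQS
          MetricName: ApproximateNumberOfMessagesVisible
          Statistic: Average
          Dimensions:
            - Name: QueueName
              Value: work-queue  # hypothetical queue name
```

Tracking raw queue depth is the simplest form of this policy. A backlog-per-task metric is a common refinement when the processing time per message is known.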

A company uses the AWS Cloud Development Kit (AWS CDK) to define its application. The company uses a pipeline that consists of AWS CodePipeline and AWS CodeBuild to deploy the CDK application. The company wants to introduce unit tests to the pipeline to test various infrastructure components. The company wants to ensure that a deployment proceeds if no unit tests result in a failure.

Which combination of steps will enforce the testing requirement in the pipeline? (Select TWO.)

A DevOps engineer needs to implement a solution to install antivirus software on all the Amazon EC2 instances in an AWS account. The EC2 instances run the most recent version of Amazon Linux.

The solution must detect all instances and must use an AWS Systems Manager document to install the software if the software is not present.

Which solution will meet these requirements?

- A . Create an association in Systems Manager State Manager. Target all the managed nodes. Include the software in the association. Configure the association to use the Systems Manager document.

- B . Set up AWS Config to record all the resources in the account. Create an AWS Config custom rule to determine if the software is installed on all the EC2 instances. Configure an automatic remediation action that uses the Systems Manager document for noncompliant EC2 instances.

- C . Activate Amazon EC2 scanning on Amazon Inspector to determine if the software is installed on all the EC2 instances. Associate the findings with the Systems Manager document.

- D . Create an Amazon EventBridge rule that uses AWS CloudTrail to detect the RunInstances API call. Configure inventory collection in Systems Manager Inventory to determine if the software is installed on the EC2 instances. Associate the Systems Manager Inventory with the Systems Manager document.

A

Explanation:

Option A best matches the requirement in the simplest, most direct way:

Systems Manager State Manager is designed to apply and maintain a desired state on managed instances by running associations on a schedule or continuously across targets (for example, “all managed nodes”).

By using a Systems Manager document in the association that installs the antivirus package (and can be written to be idempotent: “install only if not present”), State Manager both detects drift (software missing) and remediates it automatically by reapplying the desired configuration.

This approach automatically covers all instances that are managed by Systems Manager (which is typically the standard requirement for fleet management on Amazon Linux).

Why the others are more overhead or not as direct:

B can work, but it requires building and operating a custom Config rule plus remediation wiring. That’s more components and maintenance than State Manager for a straightforward “ensure software is installed” task.

C relies on Amazon Inspector, which is a vulnerability management service. It is not designed to verify that a specific software package is installed everywhere or to remediate through a Systems Manager document as a desired-state control.

D only detects new instances at launch time via CloudTrail RunInstances, and then you still need Inventory correlation logic. It’s more complex and can miss already-running instances unless additional logic is added.
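The State Manager association from option A could look roughly like the CloudFormation sketch below. The document name `Custom-InstallAntivirus` is hypothetical; it stands in for whatever Systems Manager document installs the antivirus package, and that document is assumed to be idempotent (it installs only when the software is missing). The daily schedule is also an assumption.

```yaml
Resources:
  AntivirusAssociation:
    Type: AWS::SSM::Association
    Properties:
      AssociationName: ensure-antivirus-installed
      Name: Custom-InstallAntivirus   # hypothetical SSM document name
      Targets:
        - Key: InstanceIds
          Values:
            - "*"                     # targets all managed nodes in the account
      ScheduleExpression: rate(1 day) # assumed reapply interval
```

Because the association targets every managed node, instances that register with Systems Manager later are picked up without extra wiring.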

A company is using an AWS CodeBuild project to build and package an application. The packages are copied to a shared Amazon S3 bucket before being deployed across multiple AWS accounts.

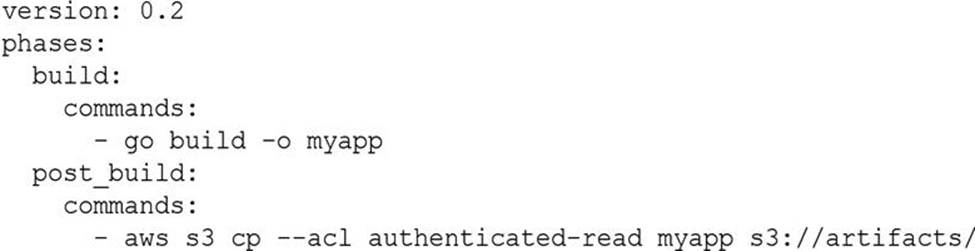

The buildspec.yml file contains the following:

The DevOps engineer has noticed that anybody with an AWS account is able to download the artifacts.

What steps should the DevOps engineer take to stop this?

- A . Modify the post_build command to use --acl public-read and configure a bucket policy that grants read access to the relevant AWS accounts only.

- B . Configure a default ACL for the S3 bucket that defines the set of authenticated users as the relevant AWS accounts only and grants read-only access.

- C . Create an S3 bucket policy that grants read access to the relevant AWS accounts and denies read access to the principal “*”.

- D . Modify the post_build command to remove --acl authenticated-read and configure a bucket policy that allows read access to the relevant AWS accounts only.

A SaaS company uses ECS (Fargate) behind an ALB and CodePipeline + CodeDeploy for blue/green deployments. They need automatic, incremental traffic shifting over time with no downtime.

Which solution will meet these requirements?

- A . Use TimeBasedLinear in appspec.yaml with defined percentage and interval.

- B . Use AllAtOnce deployment configuration.

- C . Use TimeBasedCanary.

- D . Configure weighted routing on ALB manually.

A

Explanation:

CodeDeploy supports TimeBasedLinear traffic shifting for ECS blue/green deployments. Traffic shifts in equal increments of linearPercentage every linearInterval minutes until 100% of traffic reaches the replacement task set. This provides a gradual rollout with no downtime, as described in the CodeDeploy documentation on ECS blue/green traffic shifting.
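As an illustration, a custom linear deployment configuration could be declared in CloudFormation along these lines. The resource name and the values of 10 percent every 5 minutes are assumptions; CodeDeploy also ships predefined configurations such as `CodeDeployDefault.ECSLinear10PercentEvery1Minutes` that may be enough on their own.

```yaml
Resources:
  LinearTenPercentEveryFiveMinutes:
    Type: AWS::CodeDeploy::DeploymentConfig
    Properties:
      ComputePlatform: ECS
      TrafficRoutingConfig:
        Type: TimeBasedLinear
        TimeBasedLinear:
          LinearPercentage: 10  # share of traffic shifted in each step (assumed)
          LinearInterval: 5     # minutes between steps (assumed)
```

The deployment group for the ECS service would then reference this configuration by name.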

A company uses Amazon RDS for Microsoft SQL Server as its primary database and must ensure cross-Region high availability with RPO < 1 min and RTO < 10 min.

Which solution meets these requirements?

A company uses an organization in AWS Organizations to manage multiple AWS accounts. The company’s internal auditors have administrative access to a single audit account within the organization. A DevOps engineer needs to provide a solution to give the auditors read-only access to all accounts within the organization, including new accounts created in the future.

Which solution will meet these requirements?

- A . Enable AWS IAM Identity Center for the organization. Create a read-only access permission set. Create a permission group that includes the auditors. Grant access to every account in the organization to the auditor permission group by using the read-only access permission set.

- B . Create an AWS CloudFormation stack set to deploy an IAM role that trusts the audit account and allows read-only access. Enable automatic deployment for the stack set. Set the organization root as a deployment target.

- C . Create an SCP that provides read-only access for users in the audit account. Apply the policy to the organization root.

- D . Enable AWS Config in the organization management account. Create an AWS managed rule to check for a role in each account that trusts the audit account and allows read-only access. Enable automated remediation to create the role if it does not exist.

A company is building a web application on AWS. The application uses AWS CodeConnections to access a Git repository. The company sets up a pipeline in AWS CodePipeline that automatically builds and deploys the application to a staging environment when the company pushes code to the main branch. Bugs and integration issues sometimes occur in the main branch because there is no automated testing integrated into the pipeline.

The company wants to automatically run tests when code merges occur in the Git repository and to prevent deployments from reaching the staging environment if any test fails. Tests can run up to 20 minutes.

Which solution will meet these requirements?

- A . Add an AWS CodeBuild action to the pipeline. Add a buildspec.yml file to the Git repository to define commands to run tests. Configure the pipeline to stop the deployment if a test fails.

- B . Configure Git webhooks to initiate an AWS Lambda function during each code merge. Configure the Lambda function to run tests programmatically and to stop the pipeline if a test fails.

- C . Configure AWS Batch to use Docker images of test environments. Integrate AWS Batch into the pipeline. Add an AWS Lambda function to the pipeline that submits the batch jobs and reverts the code merge if a test fails.

- D . Configure the Git repository to push code to an Amazon S3 bucket during each code merge. Use S3 Event Notifications to initiate tests and to revert the code merge if a test fails.

A

Explanation:

AWS CodePipeline supports multiple stages including source, build, test, and deploy. The most efficient way to integrate automated testing is by adding an AWS CodeBuild action to the pipeline that runs the tests using a buildspec.yml file. CodeBuild can be configured to fail the pipeline automatically if tests fail, ensuring that deployments do not proceed to the staging environment. This pattern is directly supported and documented in AWS CodePipeline + CodeBuild CI/CD architecture guidance.
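A buildspec.yml for such a test action might look like the sketch below. It assumes a Node.js project whose tests run with `npm test`; the question does not name a language or test runner, so both commands are placeholders.

```yaml
version: 0.2

phases:
  install:
    commands:
      - npm ci      # assumed: install dependencies for a Node.js project
  build:
    commands:
      - npm test    # a non-zero exit code fails the build and stops the pipeline
```

A 20-minute test run fits within a CodeBuild build timeout, whereas an AWS Lambda function (option B) cannot run longer than 15 minutes.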

A company wants to build a pipeline to update the standard AMI monthly. The AMI must be updated to use the most recent patches to ensure that launched Amazon EC2 instances are up to date. Each new AMI must be available to all AWS accounts in the company’s organization in AWS Organizations.

The company needs to configure an automated pipeline to build the AMI.

Which solution will meet these requirements with the MOST operational efficiency?

A company has a continuous integration pipeline where the company creates container images by using AWS CodeBuild. The created images are stored in Amazon Elastic Container Registry (Amazon ECR). Checking for and fixing the vulnerabilities in the images takes the company too much time. The company wants to identify the image vulnerabilities quickly and notify the security team of the vulnerabilities.

Which combination of steps will meet these requirements with the LEAST operational overhead? (Select TWO.)

- A . Activate Amazon Inspector enhanced scanning for Amazon ECR. Configure the enhanced scanning to use continuous scanning. Set up a topic in Amazon Simple Notification Service (Amazon SNS).

- B . Create an Amazon EventBridge rule for Amazon Inspector findings. Set an Amazon Simple Notification Service (Amazon SNS) topic as the rule target.

- C . Activate AWS Lambda enhanced scanning for Amazon ECR. Configure the enhanced scanning to use continuous scanning. Set up a topic in Amazon Simple Email Service (Amazon SES).

- D . Create a new AWS Lambda function. Invoke the new Lambda function when scan findings are detected.

- E . Activate default basic scanning for Amazon ECR for all container images. Configure the default basic scanning to use continuous scanning. Set up a topic in Amazon Simple Notification Service (Amazon SNS).

A company provides an application to customers. The application has an Amazon API Gateway REST API that invokes an AWS Lambda function. On initialization, the Lambda function loads a large amount of data from an Amazon DynamoDB table. The data load process results in long cold-start times of 8-10 seconds. The DynamoDB table has DynamoDB Accelerator (DAX) configured.

Customers report that the application intermittently takes a long time to respond to requests. The application receives thousands of requests throughout the day. In the middle of the day, the application experiences 10 times more requests than at any other time of the day. Near the end of the day, the application’s request volume decreases to 10% of its normal total.

A DevOps engineer needs to reduce the latency of the Lambda function at all times of the day.

Which solution will meet these requirements?

- A . Configure provisioned concurrency on the Lambda function with a concurrency value of 1. Delete the DAX cluster for the DynamoDB table.

- B . Configure reserved concurrency on the Lambda function with a concurrency value of 0.

- C . Configure provisioned concurrency on the Lambda function. Configure AWS Application Auto Scaling on the Lambda function with provisioned concurrency values set to a minimum of 1 and a maximum of 100.

- D . Configure reserved concurrency on the Lambda function. Configure AWS Application Auto Scaling on the API Gateway API with a reserved concurrency maximum value of 100.

A company needs to implement failover for its application. The application includes an Amazon CloudFront distribution and a public Application Load Balancer (ALB) in an AWS Region. The company has configured the ALB as the default origin for the distribution.

After some recent application outages, the company wants a zero-second RTO. The company deploys the application to a secondary Region in a warm standby configuration. A DevOps engineer needs to automate the failover of the application to the secondary Region so that HTTP GET requests meet the desired RTO.

Which solution will meet these requirements?

- A . Create a second CloudFront distribution that has the secondary ALB as the default origin. Create Amazon Route 53 alias records that have a failover policy and Evaluate Target Health set to Yes for both CloudFront distributions. Update the application to use the new record set.

- B . Create a new origin on the distribution for the secondary ALB. Create a new origin group. Set the original ALB as the primary origin. Configure the origin group to fail over for HTTP 5xx status codes. Update the default behavior to use the origin group.

- C . Create Amazon Route 53 alias records that have a failover policy and Evaluate Target Health set to Yes for both ALBs. Set the TTL of both records to 0. Update the distribution’s origin to use the new record set.

- D . Create a CloudFront function that detects HTTP 5xx status codes. Configure the function to return a 307 Temporary Redirect error response to the secondary ALB if the function detects 5xx status codes. Update the distribution’s default behavior to send origin responses to the function.

B

Explanation:

To implement failover for the application to the secondary Region so that HTTP GET requests meet the desired RTO, the DevOps engineer should use the following solution:

Create a new origin on the distribution for the secondary ALB. A CloudFront origin is the source of the content that CloudFront delivers to viewers. By creating a new origin for the secondary ALB, the DevOps engineer can configure CloudFront to route traffic to the secondary Region when the primary Region is unavailable. [1]

Create a new origin group. Set the original ALB as the primary origin. Configure the origin group to fail over for HTTP 5xx status codes. An origin group is a logical grouping of two origins: a primary origin and a secondary origin. By creating an origin group, the DevOps engineer can specify which origin CloudFront should use as a fallback when the primary origin fails. The DevOps engineer can also define which HTTP status codes should trigger a failover from the primary origin to the secondary origin. By setting the original ALB as the primary origin and configuring the origin group to fail over for HTTP 5xx status codes, the DevOps engineer can ensure that CloudFront will switch to the secondary ALB when the primary ALB returns server errors. [2]

Update the default behavior to use the origin group. A behavior is a set of rules that CloudFront applies when it receives requests for specific URLs or file types. The default behavior applies to all requests that do not match any other behaviors. By updating the default behavior to use the origin group, the DevOps engineer can enable failover routing for all requests that are sent to the distribution. [3]

This solution will meet the requirements because it will automate the failover of the application to the secondary Region with zero-second RTO. When CloudFront receives an HTTP GET request, it will first try to route it to the primary ALB in the primary Region. If the primary ALB is healthy and returns a successful response, CloudFront will deliver it to the viewer. If the primary ALB is unhealthy or returns an HTTP 5xx status code, CloudFront will automatically route the request to the secondary ALB in the secondary Region and deliver its response to the viewer.
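The origin group from option B could be expressed as a fragment of an `AWS::CloudFront::Distribution` resource, roughly as sketched here. The origin IDs `primary-alb` and `secondary-alb`, the group ID `alb-failover-group`, and the exact list of 5xx codes are assumptions. The origin definitions and the other required distribution properties are left out to keep the fragment short.

```yaml
# Fragment of DistributionConfig; the Origins list and other required
# properties are omitted for brevity.
DistributionConfig:
  OriginGroups:
    Quantity: 1
    Items:
      - Id: alb-failover-group        # hypothetical group ID
        FailoverCriteria:
          StatusCodes:
            Quantity: 4
            Items: [500, 502, 503, 504]
        Members:
          Quantity: 2
          Items:
            - OriginId: primary-alb   # first member is the primary origin
            - OriginId: secondary-alb # second member is the failover origin
  DefaultCacheBehavior:
    TargetOriginId: alb-failover-group  # route the default behavior to the group
    ViewerProtocolPolicy: redirect-to-https
```

Origin failover applies to GET, HEAD, and OPTIONS requests, which matches the requirement for HTTP GET requests.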

The other options are not correct because they either do not provide a zero-second RTO or do not work as expected.

Option A: Creating a second CloudFront distribution that has the secondary ALB as the default origin and creating Amazon Route 53 alias records that have a failover policy adds latency and complexity. Route 53 health checks and DNS propagation can take several minutes or longer, so viewers might experience delays or errors during a failover event. In addition, Evaluate Target Health cannot be set to Yes for alias records that point to CloudFront distributions, so Route 53 cannot detect whether an ALB behind a distribution is healthy.

Option C: Creating Route 53 alias records that have a failover policy and Evaluate Target Health set to Yes for both ALBs, and setting the TTL of both records to 0, is not a valid option. Alias records do not have a configurable TTL, and DNS-based failover still waits for Route 53 to detect that the primary ALB is unhealthy, so it does not provide a zero-second RTO.

Option D: Creating a CloudFront function that detects HTTP 5xx status codes and returns a 307 Temporary Redirect response to the secondary ALB does not provide a zero-second RTO. A 307 response tells viewers to retry their requests with a different URL, so viewers must make an additional request and wait for another response before reaching the secondary ALB.

References:

1: Adding, Editing, and Deleting Origins – Amazon CloudFront

2: Configuring Origin Failover – Amazon CloudFront

3: Creating or Updating a Cache Behavior – Amazon CloudFront

A company is developing a web application’s infrastructure using AWS CloudFormation. The database engineering team maintains the database resources in a CloudFormation template, and the software development team maintains the web application resources in a separate CloudFormation template. As the scope of the application grows, the software development team needs to use resources maintained by the database engineering team. However, both teams have their own review and lifecycle management processes that they want to keep. Both teams also require resource-level change-set reviews. The software development team would like to deploy changes to this template using their CI/CD pipeline.

Which solution will meet these requirements?

- A . Create a stack export from the database CloudFormation template and import those references into the web application CloudFormation template.

- B . Create a CloudFormation nested stack to make cross-stack resource references and parameters available in both stacks.

- C . Create a CloudFormation stack set to make cross-stack resource references and parameters available in both stacks.

- D . Create input parameters in the web application CloudFormation template and pass resource names and IDs from the database stack.

A

Explanation:

Stack Export and Import:

Use the Export feature in CloudFormation to share outputs from one stack (e.g., database resources) and use them as inputs in another stack (e.g., web application resources).

Steps to Create Stack Export:

Define the resources in the database CloudFormation template and use the Outputs section to export necessary values.

    Outputs:
      DBInstanceEndpoint:
        Value: !GetAtt DBInstance.Endpoint.Address
        Export:
          Name: DBInstanceEndpoint

Steps to Import into Web Application Stack:

In the web application CloudFormation template, use the ImportValue function to import these exported values.

    Resources:
      MyResource:
        Type: "AWS::SomeResourceType"
        Properties:
          SomeProperty: !ImportValue DBInstanceEndpoint

Resource-Level Change-Set Reviews:

Both teams can continue using their respective review processes, as changes to each stack are managed independently.

Use CloudFormation change sets to preview changes before deploying.

By exporting resources from the database stack and importing them into the web application stack, both teams can maintain their separate review and lifecycle management processes while sharing necessary resources.

Reference: AWS CloudFormation Export

AWS CloudFormation ImportValue