Practice Free DOP-C02 Exam Online Questions

A DevOps engineer has automated a web service deployment by using AWS CodePipeline with the following steps:

1) An AWS CodeBuild project compiles the deployment artifact and runs unit tests.

2) An AWS CodeDeploy deployment group deploys the web service to Amazon EC2 instances in the staging environment.

3) A CodeDeploy deployment group deploys the web service to EC2 instances in the production environment.

The quality assurance (QA) team requests permission to inspect the build artifact before the deployment to the production environment occurs. The QA team wants to run an internal penetration testing tool to conduct manual tests. The tool will be invoked by a REST API call.

Which combination of actions should the DevOps engineer take to fulfill this request? (Choose two.)

- A . Insert a manual approval action between the test actions and deployment actions of the pipeline.

- B . Modify the buildspec.yml file for the compilation stage to require manual approval before completion.

- C . Update the CodeDeploy deployment groups so that they require manual approval to proceed.

- D . Update the pipeline to directly call the REST API for the penetration testing tool.

- E . Update the pipeline to invoke an AWS Lambda function that calls the REST API for the penetration testing tool.
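Option E describes a Lambda function that sits in the pipeline and calls the tool's REST API. Below is a minimal sketch of such a function for the Python Lambda runtime. The endpoint URL, the request body, and the injectable `call_api` and `codepipeline` parameters are hypothetical. CodePipeline passes a job ID to the function and waits until the function reports `put_job_success_result` or `put_job_failure_result` for that job.

```python
import json
import urllib.request

# Hypothetical endpoint of the QA team's penetration testing tool (assumption).
PENTEST_API_URL = "https://pentest.example.internal/api/scans"


def call_pentest_api(artifact_location: str) -> int:
    """POST the artifact location to the (hypothetical) pentest REST API and return the HTTP status."""
    body = json.dumps({"artifact": artifact_location}).encode("utf-8")
    request = urllib.request.Request(
        PENTEST_API_URL,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=10) as response:
        return response.status


def handler(event, context, codepipeline=None, call_api=call_pentest_api):
    """Lambda invoke action: trigger the pentest tool, then report the job result to CodePipeline."""
    if codepipeline is None:
        import boto3  # bundled with the AWS Lambda Python runtime
        codepipeline = boto3.client("codepipeline")

    job = event["CodePipeline.job"]
    job_id = job["id"]
    # S3 location of the build artifact that CodePipeline passes to the action.
    s3 = job["data"]["inputArtifacts"][0]["location"]["s3Location"]
    artifact = f"s3://{s3['bucketName']}/{s3['objectKey']}"

    try:
        status = call_api(artifact)
        if 200 <= status < 300:
            codepipeline.put_job_success_result(jobId=job_id)
        else:
            codepipeline.put_job_failure_result(
                jobId=job_id,
                failureDetails={"type": "JobFailed", "message": f"Pentest API returned {status}"},
            )
    except Exception as exc:  # report errors instead of letting the job time out
        codepipeline.put_job_failure_result(
            jobId=job_id,
            failureDetails={"type": "JobFailed", "message": str(exc)},
        )
```

Because the function only triggers the tool, the manual inspection itself still needs a point in the pipeline where a person can stop the release before the production deployment.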

A company is using the AWS Cloud Development Kit (AWS CDK) to develop a microservices-based application. The company needs to create reusable infrastructure components for three environments: development, staging, and production. The components must include networking resources, database resources, and serverless compute resources.

The company must implement a solution that provides consistent infrastructure across environments while offering the option for environment-specific customizations. The solution also must minimize code duplication.

Which solution will meet these requirements with the LEAST development overhead?

- A . Create custom Level 1 (L1) constructs out of Level 2 (L2) constructs where repeatable patterns exist. Create a single set of deployment stacks that takes the environment name as an argument upon instantiation. Deploy CDK applications for each environment.

- B . Create custom Level 1 (L1) constructs out of Level 2 (L2) constructs where repeatable patterns exist. Create separate deployment stacks for each environment. Use the CDK context command to determine which stacks to run when deploying to each environment.

- C . Create custom Level 3 (L3) constructs out of Level 2 (L2) constructs where repeatable patterns exist. Create a single set of deployment stacks that takes the environment name as an argument upon instantiation. Deploy CDK applications for each environment.

- D . Create custom Level 3 (L3) constructs out of Level 2 (L2) constructs where repeatable patterns exist. Create separate deployment stacks for each environment. Use the CDK context command to determine which stacks to run when deploying to each environment.

C

Explanation:


AWS CDK recommends using custom Level 3 (L3) constructs to encapsulate reusable multi-resource infrastructure patterns (networking, compute, and database). Then, define a single set of environment-aware stacks that accept environment parameters for deployment. This ensures consistency with minimal code duplication, in line with the AWS CDK best practices and design patterns whitepaper.
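The CDK construct code itself is not shown in this explanation. As a hedged, library-independent sketch, the Python below models only the configuration side of "one set of stacks, parameterized by environment name": shared defaults plus per-environment overrides that a single stack class could look up from the environment name it receives at instantiation. All field names and values are illustrative assumptions, not recommendations.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class EnvConfig:
    """Settings a shared stack could read; fields and defaults are illustrative assumptions."""
    vpc_max_azs: int = 2
    db_instance_class: str = "db.t3.medium"
    db_multi_az: bool = False
    lambda_memory_mb: int = 256


DEFAULTS = EnvConfig()

# Environment-specific customizations, expressed only as differences from the defaults.
OVERRIDES = {
    "development": {},
    "staging": {"db_multi_az": True},
    "production": {
        "vpc_max_azs": 3,
        "db_instance_class": "db.r6g.large",
        "db_multi_az": True,
        "lambda_memory_mb": 1024,
    },
}


def config_for(env_name: str) -> EnvConfig:
    """Return the defaults with the named environment's overrides applied."""
    if env_name not in OVERRIDES:
        raise ValueError(f"unknown environment: {env_name}")
    return replace(DEFAULTS, **OVERRIDES[env_name])
```

Keeping the differences in one table means the L3 constructs and stacks are written once, and adding an environment is a data change rather than a new stack definition.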

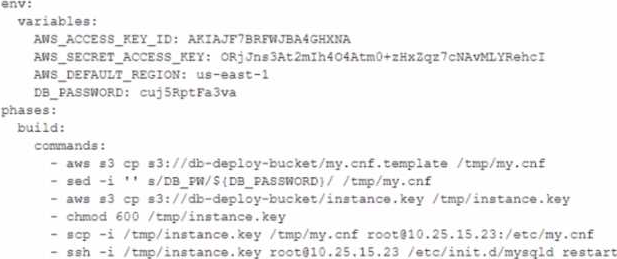

A DevOps engineer is working on a project that is hosted on Amazon Linux and has failed a security review. The DevOps manager has been asked to review the company's buildspec.yaml file for an AWS CodeBuild project and provide recommendations.

The buildspec.yaml file is configured as follows:

What changes should be recommended to comply with AWS security best practices? (Select THREE.)

- A . Add a post-build command to remove the temporary files from the container before termination to ensure they cannot be seen by other CodeBuild users.

- B . Update the CodeBuild project role with the necessary permissions and then remove the AWS credentials from the environment variable.

- C . Store the db_password as a SecureString value in AWS Systems Manager Parameter Store and then remove the db_password from the environment variables.

- D . Move the environment variables to the ‘db-deploy-bucket’ Amazon S3 bucket, and add a pre-build stage to download and then export the variables.

- E . Use AWS Systems Manager Run Command instead of running scp and ssh commands directly against the instance.

BCE

Explanation:

B. Update the CodeBuild project role with the necessary permissions and then remove the AWS credentials from the environment variable.

C. Store the DB_PASSWORD as a SecureString value in AWS Systems Manager Parameter Store and then remove the DB_PASSWORD from the environment variables.

E. Use AWS Systems Manager Run Command instead of running scp and ssh commands directly against the instance.
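Options C and E map to two Systems Manager API calls. The sketch below, which takes a boto3-style `ssm` client as a parameter, reads a SecureString with `GetParameter` and `WithDecryption=True`, and runs commands through Run Command's `AWS-RunShellScript` document instead of scp/ssh. The parameter name `/myapp/db_password` is a hypothetical example. Credentials come from the CodeBuild project role (option B), so no access keys appear in the code. CodeBuild can also inject a Parameter Store value directly through the `parameter-store` mapping in the buildspec `env` section, which avoids custom code for option C.

```python
def get_db_password(ssm, parameter_name="/myapp/db_password"):
    """Read a SecureString parameter; WithDecryption=True returns the decrypted value.

    The parameter name is a hypothetical example.
    """
    response = ssm.get_parameter(Name=parameter_name, WithDecryption=True)
    return response["Parameter"]["Value"]


def run_on_instances(ssm, instance_ids, commands):
    """Run shell commands via Systems Manager Run Command instead of scp/ssh.

    Returns the command ID, which can be used to poll for per-instance results.
    """
    response = ssm.send_command(
        InstanceIds=list(instance_ids),
        DocumentName="AWS-RunShellScript",
        Parameters={"commands": list(commands)},
    )
    return response["Command"]["CommandId"]
```

In a real build, the client would come from `boto3.client("ssm")`; passing it in keeps the functions testable without AWS access.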

A DevOps engineer uses AWS CodeBuild to frequently produce software packages. The CodeBuild project builds large Docker images that the DevOps engineer can use across multiple builds. The DevOps engineer wants to improve build performance and minimize costs.

Which solution will meet these requirements?

- A . Store the Docker images in an Amazon Elastic Container Registry (Amazon ECR) repository. Implement a local Docker layer cache for CodeBuild.

- B . Cache the Docker images in an Amazon S3 bucket that is available across multiple build hosts. Expire the cache by using an S3 Lifecycle policy.

- C . Store the Docker images in an Amazon Elastic Container Registry (Amazon ECR) repository. Modify the CodeBuild project runtime configuration to always use the most recent image version.

- D . Create custom AMIs that contain the cached Docker images. In the CodeBuild build, launch Amazon EC2 instances from the custom AMIs.

A

Explanation:

Step 1: Storing Docker Images in Amazon ECR

Docker images can be large, and storing them in a centralized, scalable location can greatly reduce build times. Amazon Elastic Container Registry (Amazon ECR) is a fully managed container registry that stores, manages, and deploys Docker container images.

Action: Store the Docker images in an ECR repository.

Why: Storing Docker images in ECR ensures that Docker images can be reused across multiple builds, improving build performance by avoiding the need to rebuild the images from scratch.

Reference: AWS documentation on Amazon ECR.

Step 2: Implementing Docker Layer Caching in CodeBuild

Docker layer caching is essential for improving performance in continuous integration pipelines. CodeBuild supports local caching of Docker layers, which speeds up builds that reuse Docker images across multiple runs.

Action: Implement Docker layer caching within the CodeBuild project.

Why: This improves performance by allowing frequently used Docker layers to be cached locally, avoiding the need to pull or build the layers every time.

Reference: AWS documentation on Docker Layer Caching in CodeBuild.

This corresponds to Option A: Store the Docker images in an Amazon Elastic Container Registry (Amazon ECR) repository. Implement a local Docker layer cache for CodeBuild.
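To make Step 2 concrete, here is a sketch that enables the cache through the CodeBuild `UpdateProject` API with a boto3-style client. The `LOCAL` cache type with the `LOCAL_DOCKER_LAYER_CACHE` mode is CodeBuild's Docker layer cache setting. The project name used below is hypothetical, and the client is passed in so the function can be exercised without AWS credentials.

```python
# CodeBuild cache setting for local Docker layer caching.
DOCKER_LAYER_CACHE = {"type": "LOCAL", "modes": ["LOCAL_DOCKER_LAYER_CACHE"]}


def enable_docker_layer_cache(codebuild, project_name):
    """Turn on local Docker layer caching for an existing CodeBuild project.

    `codebuild` is expected to behave like boto3.client("codebuild").
    Returns the cache configuration reported back by the service.
    """
    response = codebuild.update_project(name=project_name, cache=dict(DOCKER_LAYER_CACHE))
    return response["project"]["cache"]
```

Building Docker images in CodeBuild also requires privileged mode on the build environment. A local cache lives on the build host, so cache hits are best-effort rather than guaranteed, but it adds no S3 storage or transfer, which fits the cost goal better than option B.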

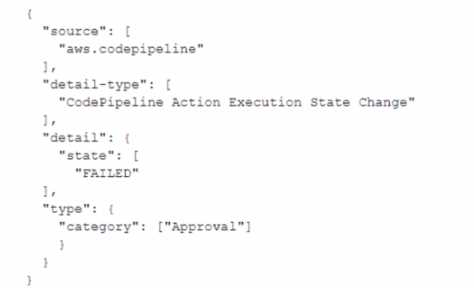

A company is implementing AWS CodePipeline to automate its testing process.

The company wants to be notified when the execution state fails and uses the following custom event pattern in Amazon EventBridge:

Which type of events will match this event pattern?

- A . Failed deploy and build actions across all the pipelines

- B . All rejected or failed approval actions across all the pipelines

- C . All the events across all pipelines

- D . Approval actions across all the pipelines

B

Explanation:

Action-level states in events:

| Action state | Description |
|---|---|
| STARTED | The action is currently running. |
| SUCCEEDED | The action was completed successfully. |
| FAILED | For Approval actions, the FAILED state means the action was either rejected by the reviewer or failed due to an incorrect action configuration. |
| CANCELED | The action was canceled because the pipeline structure was updated. |
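The question's event pattern is not reproduced in this document. As a hedged illustration only, the pattern below is an assumed example that behaves the way answer B describes: it selects `CodePipeline Action Execution State Change` events whose state is `FAILED` and whose action category is `Approval`. The `matches` function is a simplified model of EventBridge matching that supports exact values only (no prefix, numeric, or anything-but filters). It shows why such a pattern catches rejected approvals, per the `FAILED` row of the action states listed above, but not failed build or deploy actions.

```python
# Assumed example pattern; not the exact pattern from the question.
PATTERN = {
    "source": ["aws.codepipeline"],
    "detail-type": ["CodePipeline Action Execution State Change"],
    "detail": {"state": ["FAILED"], "type": {"category": ["Approval"]}},
}


def matches(pattern, event):
    """Simplified EventBridge matching: nested objects plus lists of exact allowed values."""
    for key, expected in pattern.items():
        if key not in event:
            return False
        actual = event[key]
        if isinstance(expected, dict):
            # Nested pattern objects must match nested event objects.
            if not isinstance(actual, dict) or not matches(expected, actual):
                return False
        else:
            # A list in the pattern means "any of these values"; an array in the
            # event matches if any of its elements is in the list.
            values = actual if isinstance(actual, list) else [actual]
            if not any(value in expected for value in values):
                return False
    return True
```

Every field in the pattern must match, so adding `"type": {"category": ["Approval"]}` narrows the rule from all failed actions to failed (including rejected) approval actions.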

A company has deployed a new REST API by using Amazon API Gateway. The company uses the API to access confidential data. The API must be accessed from only specific VPCs in the company.

Which solution will meet these requirements?

- A . Create and attach a resource policy to the API Gateway API. Configure the resource policy to allow only the specific VPC IDs.

- B . Add a security group to the API Gateway API. Configure the inbound rules to allow only the specific VPC IP address ranges.

- C . Create and attach an IAM role to the API Gateway API. Configure the IAM role to allow only the specific VPC IDs.

- D . Add an ACL to the API Gateway API. Configure the outbound rules to allow only the specific VPC IP address ranges.
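Option A's resource policy could take roughly the following shape. This sketch assumes the API is a private API reached through interface VPC endpoints, which is when the `aws:SourceVpc` condition key is present in the request. The VPC IDs used below are placeholders. The policy allows `execute-api:Invoke` and then explicitly denies any request whose source VPC is not in the list; the explicit deny overrides the allow.

```python
import json


def private_api_policy(vpc_ids):
    """Build an API Gateway resource policy that only admits calls from the listed VPCs."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Principal": "*",
                "Action": "execute-api:Invoke",
                "Resource": "execute-api:/*",
            },
            {
                # With a negated operator and several values, the condition is true
                # when the source VPC matches none of them, so other VPCs are denied.
                "Effect": "Deny",
                "Principal": "*",
                "Action": "execute-api:Invoke",
                "Resource": "execute-api:/*",
                "Condition": {"StringNotEquals": {"aws:SourceVpc": list(vpc_ids)}},
            },
        ],
    }


if __name__ == "__main__":
    print(json.dumps(private_api_policy(["vpc-0aaa1111", "vpc-0bbb2222"]), indent=2))
```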

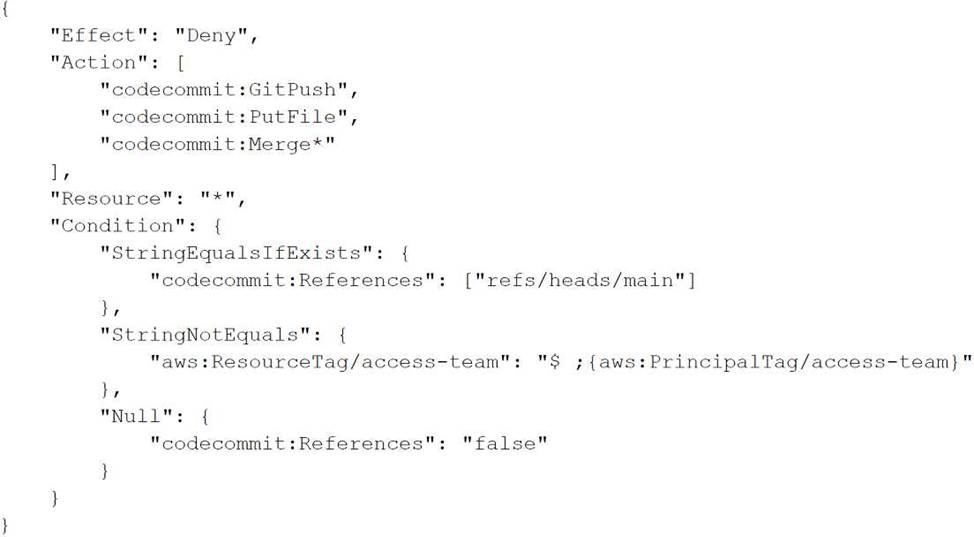

A company’s application teams use AWS CodeCommit repositories for their applications. The application teams have repositories in multiple AWS accounts. All accounts are in an organization in AWS Organizations.

Each application team uses AWS IAM Identity Center (AWS Single Sign-On) configured with an external IdP to assume a developer IAM role. The developer role allows the application teams to use Git to work with the code in the repositories.

A security audit reveals that the application teams can modify the main branch in any repository. A DevOps engineer must implement a solution that allows the application teams to modify the main branch of only the repositories that they manage.

Which combination of steps will meet these requirements? (Select THREE.)

- A . Update the SAML assertion to pass the user’s team name. Update the IAM role’s trust policy to add an access-team session tag that has the team name.

- B . Create an approval rule template for each team in the Organizations management account. Associate the template with all the repositories. Add the developer role ARN as an approver.

- C . Create an approval rule template for each account. Associate the template with all repositories. Add the "aws:ResourceTag/access-team":"${aws:PrincipalTag/access-team}" condition to the approval rule template.

- D . For each CodeCommit repository, add an access-team tag that has the value set to the name of the associated team.

- E . Attach an SCP to the accounts. Include the following statement:

- F . Create an IAM permissions boundary in each account. Include the following statement:

ADE

Explanation:

Option A: Update the SAML assertion to pass the user’s team name. This can be done within the identity provider’s configuration. Update the IAM role’s trust policy to include an access-team session tag that carries the team name. This tag will be used to control access based on the team association.

Option D: For each AWS CodeCommit repository, add an access-team tag that has the value set to the name of the team that manages it. This tagging strategy will be crucial for access control, as it allows you to set conditions based on these tags.

Option E: Attach a service control policy (SCP) to the accounts. The SCP should include a statement like the one shown in option E, which denies actions such as GitPush, PutFile, and Merge* unless the repository's access-team tag matches the caller's access-team session tag. This policy prevents users from modifying the main branch unless their access-team tag matches the repository's access-team tag.
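Option E's statement is not reproduced in this document. Below is a hedged reconstruction of a statement with the behavior the explanation describes, built as a Python dict that can be serialized with `json.dumps`. The `codecommit:References` conditions limit the deny to the `main` branch, and the `Null` check applies it only when the request carries branch information. The `aws:ResourceTag`/`aws:PrincipalTag` comparison relies on the repository tag from option D and the session tag from option A. The Sid and the exact action list are assumptions.

```python
import json


def main_branch_scp():
    """SCP statement (reconstruction) denying main-branch writes outside the owning team."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "DenyMainBranchWritesOutsideTeam",  # hypothetical Sid
                "Effect": "Deny",
                "Action": [
                    "codecommit:GitPush",
                    "codecommit:DeleteBranch",
                    "codecommit:PutFile",
                    "codecommit:Merge*",
                ],
                "Resource": "*",
                "Condition": {
                    # Only requests that target refs/heads/main are denied.
                    "StringEqualsIfExists": {"codecommit:References": ["refs/heads/main"]},
                    "Null": {"codecommit:References": "false"},
                    # ...and only when the repository tag differs from the caller's session tag.
                    "StringNotEquals": {
                        "aws:ResourceTag/access-team": "${aws:PrincipalTag/access-team}"
                    },
                },
            }
        ],
    }


if __name__ == "__main__":
    print(json.dumps(main_branch_scp(), indent=2))
```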

A company containerized its Java application and uses AWS CodePipeline. The company wants to scan images in Amazon ECR for vulnerabilities and to reject images that have critical vulnerabilities in a manual approval stage.

Which solution meets these requirements?

- A . Basic scanning with EventBridge for Inspector findings and Lambda to reject manual approval if critical vulnerabilities found.

- B . Enhanced scanning, Lambda invokes Inspector for SBOM, exports to S3, Athena queries SBOM, rejects manual approval on critical findings.

- C . Enhanced scanning, EventBridge listens to Detective scan findings, Lambda rejects manual approval on critical vulnerabilities.

- D . Enhanced scanning, EventBridge listens to Inspector scan findings, Lambda rejects manual approval on critical vulnerabilities.

D

Explanation:

Amazon ECR enhanced scanning uses Amazon Inspector for vulnerability detection.

EventBridge can capture Inspector scan findings.

Lambda can process scan findings and reject manual approval if critical vulnerabilities exist.

Options A and C use incorrect or less integrated services (basic scanning or Detective).

Option B adds unnecessary complexity with SBOM and Athena.

References:

Amazon ECR Image Scanning

Integrating ECR Scanning with CodePipeline
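Below is a sketch of the Lambda function in option D. It assumes an EventBridge rule that matches `source` `aws.inspector2`, `detail-type` `Inspector2 Finding`, and `detail.severity` `CRITICAL`. The pipeline, stage, and action names are hypothetical. The function finds the pending approval's token with `GetPipelineState` and rejects it with `PutApprovalResult`. For brevity, it does not check that the finding belongs to the image currently awaiting approval; a production version would likely need to compare the finding's image digest with the pipeline's artifact.

```python
PIPELINE_NAME = "java-app-pipeline"   # hypothetical pipeline name
APPROVAL_STAGE = "Approval"           # hypothetical stage name
APPROVAL_ACTION = "ManualApproval"    # hypothetical action name


def find_pending_approval_token(codepipeline):
    """Return the token of the in-progress manual approval action, or None."""
    state = codepipeline.get_pipeline_state(name=PIPELINE_NAME)
    for stage in state.get("stageStates", []):
        if stage.get("stageName") != APPROVAL_STAGE:
            continue
        for action in stage.get("actionStates", []):
            if action.get("actionName") != APPROVAL_ACTION:
                continue
            execution = action.get("latestExecution", {})
            if execution.get("status") == "InProgress":
                return execution.get("token")
    return None


def handler(event, context, codepipeline=None):
    """Reject the pending approval when Amazon Inspector reports a CRITICAL finding."""
    if codepipeline is None:
        import boto3  # bundled with the AWS Lambda Python runtime
        codepipeline = boto3.client("codepipeline")

    if event.get("detail", {}).get("severity") != "CRITICAL":
        return "ignored"

    token = find_pending_approval_token(codepipeline)
    if token is None:
        return "no pending approval"

    codepipeline.put_approval_result(
        pipelineName=PIPELINE_NAME,
        stageName=APPROVAL_STAGE,
        actionName=APPROVAL_ACTION,
        result={"summary": "Critical vulnerability reported by Amazon Inspector", "status": "Rejected"},
        token=token,
    )
    return "rejected"
```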

A DevOps engineer needs a resilient CI/CD pipeline that builds container images, stores them in Amazon ECR, scans the images for vulnerabilities, and is resilient to outages in upstream source image repositories.

Which solution meets these requirements?

A DevOps engineer at a company is supporting an AWS environment in which all users use AWS IAM Identity Center (AWS Single Sign-On). The company wants to immediately disable credentials of any new IAM user and wants the security team to receive a notification.

Which combination of steps should the DevOps engineer take to meet these requirements? (Choose three.)

- A . Create an Amazon EventBridge rule that reacts to an IAM CreateUser API call in AWS CloudTrail.

- B . Create an Amazon EventBridge rule that reacts to an IAM GetLoginProfile API call in AWS CloudTrail.

- C . Create an AWS Lambda function that is a target of the EventBridge rule. Configure the Lambda function to disable any access keys and delete the login profiles that are associated with the IAM user.

- D . Create an AWS Lambda function that is a target of the EventBridge rule. Configure the Lambda function to delete the login profiles that are associated with the IAM user.

- E . Create an Amazon Simple Notification Service (Amazon SNS) topic that is a target of the EventBridge rule. Subscribe the security team’s group email address to the topic.

- F . Create an Amazon Simple Queue Service (Amazon SQS) queue that is a target of the Lambda function. Subscribe the security team’s group email address to the queue.
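The Lambda function that option C describes could look roughly like the following sketch. It assumes an EventBridge rule for CloudTrail `CreateUser` events (for example, `source` `aws.iam`, `detail-type` `AWS API Call via CloudTrail`, `detail.eventName` `CreateUser`), which carries the new user's name in `detail.requestParameters.userName`. IAM is a global service, so these events arrive in EventBridge in US East (N. Virginia). The function deactivates the user's access keys with `UpdateAccessKey` and deletes the console password with `DeleteLoginProfile`, ignoring `NoSuchEntity` when the user has none. The `iam` client is injectable so the logic can be tested offline.

```python
def handler(event, context, iam=None):
    """Disable credentials of a newly created IAM user (sketch)."""
    if iam is None:
        import boto3  # bundled with the AWS Lambda Python runtime
        iam = boto3.client("iam")

    user_name = event["detail"]["requestParameters"]["userName"]

    # A user can hold at most two access keys, so a single unpaginated call suffices.
    keys = iam.list_access_keys(UserName=user_name)["AccessKeyMetadata"]
    for key in keys:
        # Deactivate rather than delete, so the action is reversible after review.
        iam.update_access_key(UserName=user_name, AccessKeyId=key["AccessKeyId"], Status="Inactive")

    try:
        iam.delete_login_profile(UserName=user_name)
    except iam.exceptions.NoSuchEntityException:
        pass  # the user has no console password

    return user_name
```

Keys or a login profile created after the function runs are not covered by this sketch; the SNS topic on the same rule is what notifies the security team.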

A company runs a web application that extends across multiple Availability Zones. The company uses an Application Load Balancer (ALB) for routing, AWS Fargate for the application, and Amazon Aurora for the application data. The company uses AWS CloudFormation templates to deploy the application. The company stores all Docker images in an Amazon Elastic Container Registry (Amazon ECR) repository in the same AWS account and AWS Region.

A DevOps engineer needs to establish a disaster recovery (DR) process in another Region. The solution must meet an RPO of 8 hours and an RTO of 2 hours. The company sometimes needs more than 2 hours to build the Docker images from the Dockerfile.

Which solution will meet the RTO and RPO requirements MOST cost-effectively?

- A . Copy the CloudFormation templates and the Dockerfile to an Amazon S3 bucket in the DR Region. Use AWS Backup to configure automated Aurora cross-Region hourly snapshots. In case of DR, build the most recent Docker image and upload the Docker image to an ECR repository in the DR Region. Use the CloudFormation template that has the most recent Aurora snapshot and the Docker image from the ECR repository to launch a new CloudFormation stack in the DR Region. Update the application DNS records to point to the new ALB.

- B . Copy the CloudFormation templates to an Amazon S3 bucket in the DR Region. Configure Aurora automated backup Cross-Region Replication. Configure ECR Cross-Region Replication. In case of DR, use the CloudFormation template with the most recent Aurora snapshot and the Docker image from the local ECR repository to launch a new CloudFormation stack in the DR Region. Update the application DNS records to point to the new ALB.

- C . Copy the CloudFormation templates to an Amazon S3 bucket in the DR Region. Use Amazon EventBridge to schedule an AWS Lambda function to take an hourly snapshot of the Aurora database and of the most recent Docker image in the ECR repository. Copy the snapshot and the Docker image to the DR Region. In case of DR, use the CloudFormation template with the most recent Aurora snapshot and the Docker image from the local ECR repository to launch a new CloudFormation stack in the DR Region.

- D . Copy the CloudFormation templates to an Amazon S3 bucket in the DR Region. Deploy a second application CloudFormation stack in the DR Region. Reconfigure Aurora to be a global database. Update both CloudFormation stacks when a new application release in the current Region is needed. In case of DR, update the application DNS records to point to the new ALB.

B

Explanation:

The most cost-effective solution to meet the RTO and RPO requirements is option B. This option involves copying the CloudFormation templates to an Amazon S3 bucket in the DR Region, configuring Aurora automated backup Cross-Region Replication, and configuring ECR Cross-Region Replication. In the event of a disaster, the CloudFormation template with the most recent Aurora snapshot and the Docker image from the local ECR repository can be used to launch a new CloudFormation stack in the DR Region. This approach avoids the need to build Docker images from the Dockerfile, which can sometimes take more than 2 hours, thus meeting the RTO requirement. Additionally, the use of automated backups and replication ensures that the RPO of 8 hours is met.

References:

AWS Documentation on Disaster Recovery: Plan for Disaster Recovery (DR), Reliability Pillar

AWS Blog: Establishing RPO and RTO Targets for Cloud Applications

AWS Documentation on ECR Cross-Region Replication: Amazon ECR Cross-Region Replication

AWS Documentation on Aurora Cross-Region Replication: Replicating Amazon Aurora DB Clusters Across AWS Regions
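Option B's ECR Cross-Region Replication is configured once at the registry level. The sketch below calls ECR's `PutReplicationConfiguration` through a boto3-style client. The DR Region and account ID used in the test are placeholders. Replication applies to images pushed after the configuration is in place, so it should be set up before it is needed. Because the images already exist in the DR Region when a disaster occurs, the multi-hour image build is removed from the recovery path, which is what keeps recovery within the 2-hour RTO.

```python
def replication_configuration(dr_region, registry_id):
    """Registry replication rule that copies pushed images to one destination Region."""
    return {"rules": [{"destinations": [{"region": dr_region, "registryId": registry_id}]}]}


def enable_ecr_replication(ecr, dr_region, registry_id):
    """Apply the replication rule; `ecr` is expected to behave like boto3.client("ecr").

    `registry_id` is the AWS account ID that owns the destination registry.
    """
    response = ecr.put_replication_configuration(
        replicationConfiguration=replication_configuration(dr_region, registry_id)
    )
    return response["replicationConfiguration"]
```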