Practice Free DOP-C02 Exam Online Questions

A development team manually builds an artifact locally and then places it in an Amazon S3 bucket. The application has a local cache that must be cleared when a deployment occurs. The team runs a command to do this downloads the artifact from Amazon S3 and unzips the artifact to complete the deployment.

A DevOps team wants to migrate to a CI/CD process and build in checks to stop and roll back the deployment when a failure occurs. This requires the team to track the progression of the deployment.

Which combination of actions will accomplish this? (Select THREE)

- A . Allow developers to check the code into a code repository Using Amazon EventBridge on every pull into the mam branch invoke an AWS Lambda function to build the artifact and store it in Amazon S3.

- B . Create a custom script to clear the cache Specify the script in the Beforelnstall lifecycle hook in the AppSpec file.

- C . Create user data for each Amazon EC2 instance that contains the clear cache script Once deployed test the application If it is not successful deploy it again.

- D . Set up AWS CodePipeline to deploy the application Allow developers to check the code into a code repository as a source tor the pipeline.

- E . Use AWS CodeBuild to build the artifact and place it in Amazon S3 Use AWS CodeDeploy to deploy the artifact to Amazon EC2 instances.

- F . Use AWS Systems Manager to fetch the artifact from Amazon S3 and deploy it to all the instances.

A company has multiple AWS accounts. The company uses AWS IAM Identity Center (AWS Single Sign-On) that is integrated with AWS Toolkit for Microsoft Azure DevOps. The attributes for access control feature is enabled in IAM Identity Center.

The attribute mapping list contains two entries. The department key is mapped to ${path:enterprise.department}. The costCenter key is mapped to ${path:enterprise.costCenter}.

All existing Amazon EC2 instances have a department tag that corresponds to three company departments (d1, d2, d3). A DevOps engineer must create policies based on the matching attributes. The policies must minimize administrative effort and must grant each Azure AD user access to only the EC2 instances that are tagged with the user’s respective department name.

Which condition key should the DevOps engineer include in the custom permissions policies to meet these requirements?

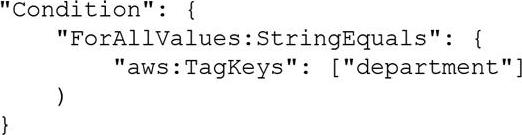

A)

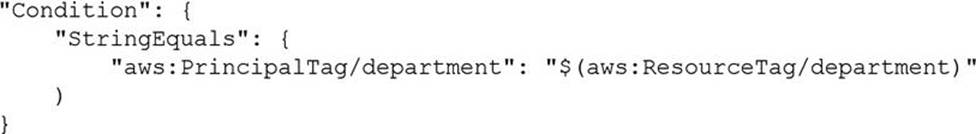

B)

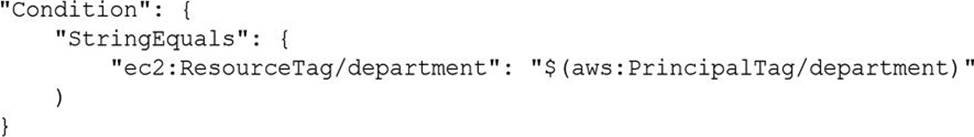

C)

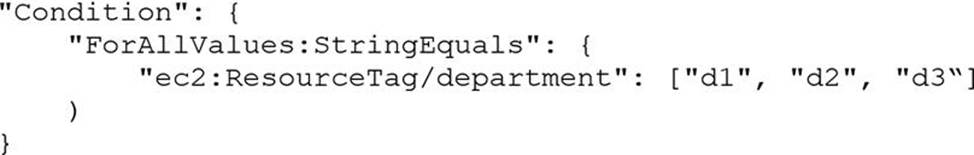

D)

- A . Option A

- B . Option B

- C . Option C

- D . Option D

A development team wants to use AWS CloudFormation stacks to deploy an application. However, the developer IAM role does not have the required permissions to provision the resources that are specified in the AWS CloudFormation template. A DevOps engineer needs to implement a solution that allows the developers to deploy the stacks. The solution must follow the principle of least privilege.

Which solution will meet these requirements?

- A . Create an IAM policy that allows the developers to provision the required resources. Attach the policy to the developer IAM role.

- B . Create an IAM policy that allows full access to AWS CloudFormation. Attach the policy to the developer IAM role.

- C . Create an AWS CloudFormation service role that has the required permissions. Grant the developer IAM role a cloudformation:* action. Use the new service role during stack deployments.

- D . Create an AWS CloudFormation service role that has the required permissions. Grant the developer IAM role the iam:PassRole permission. Use the new service role during stack deployments.

A company has configured an Amazon S3 event source on an AWS Lambda function The company needs the Lambda function to run when a new object is created or an existing object IS modified In a particular S3 bucket The Lambda function will use the S3 bucket name and the S3 object key of the incoming event to read the contents of the created or modified S3 object The Lambda function will parse the contents and save the parsed contents to an Amazon DynamoDB table.

The Lambda function’s execution role has permissions to read from the S3 bucket and to write to the DynamoDB table, During testing, a DevOps engineer discovers that the Lambda

function does not run when objects are added to the S3 bucket or when existing objects are modified.

Which solution will resolve this problem?

- A . Increase the memory of the Lambda function to give the function the ability to process large files from the S3 bucket.

- B . Create a resource policy on the Lambda function to grant Amazon S3 the permission to invoke the Lambda function for the S3 bucket

- C . Configure an Amazon Simple Queue Service (Amazon SQS) queue as an OnFailure destination for the Lambda function

- D . Provision space in the /tmp folder of the Lambda function to give the function the ability to process large files from the S3 bucket

B

Explanation:

Option A is incorrect because increasing the memory of the Lambda function does not address the root cause of the problem, which is that the Lambda function is not triggered by the S3 event source. Increasing the memory of the Lambda function might improve its performance or reduce its execution time, but it does not affect its invocation. Moreover, increasing the memory of the Lambda function might incur higher costs, as Lambda charges based on the amount of memory allocated to the function.

Option B is correct because creating a resource policy on the Lambda function to grant Amazon S3 the permission to invoke the Lambda function for the S3 bucket is a necessary step to configure an S3 event source. A resource policy is a JSON document that defines who can access a Lambda resource and under what conditions. By granting Amazon S3 permission to invoke the Lambda function, the company ensures that the Lambda function runs when a new object is created or an existing object is modified in the S3 bucket1.

Option C is incorrect because configuring an Amazon Simple Queue Service (Amazon SQS) queue as an On-Failure destination for the Lambda function does not help with triggering the Lambda function. An On-Failure destination is a feature that allows Lambda to send events to another service, such as SQS or Amazon Simple Notification Service (Amazon SNS), when a function invocation fails. However, this feature only applies to asynchronous invocations, and S3 event sources use synchronous invocations. Therefore, configuring an SQS queue as an On-Failure destination would have no effect on the problem.

Option D is incorrect because provisioning space in the /tmp folder of the Lambda function does not address the root cause of the problem, which is that the Lambda function is not triggered by the S3 event source. Provisioning space in the /tmp folder of the Lambda function might help with processing large files from the S3 bucket, as it provides temporary storage for up to 512 MB of data. However, it does not affect the invocation of the Lambda function.

References:

Using AWS Lambda with Amazon S3

Lambda resource access permissions

AWS Lambda destinations

[AWS Lambda file system]

A company in a highly regulated industry is building an artifact by using AWS CodeBuild and AWS CodePipeline. The company must connect to an external authenticated API during the building process.

The company’s DevOps engineer needs to encrypt the build outputs by using an AWS Key Management Service (AWS KMS) key. The external API credentials must be reset each month. The DevOps engineer has created a new key in AWS KMS.

Which solution will meet these requirements?

- A . Store the API credentials in AWS Systems Manager Parameter Store. Update the key policy for the CodeBuild IAM service role to have access to the KMS key. Set CODEBUILD_KMS_KEY_ID as the new key ID.

- B . Store the API credentials in AWS Systems Manager Parameter Store. Update the key policy for the CodePipeline IAM service role to have access to the KMS key. Add the key to the pipeline.

- C . Store the API credentials in AWS Secrets Manager. Update the key policy for the CodeBuild IAM service role to have access to the KMS key. Set CODEBUILD_KMS_KEY_ID as the new key ID.

- D . Store the API credentials in AWS Secrets Manager. Update the key policy for the CodePipeline IAM service role to have access to the KMS key. Add the key to the pipeline.

C

Explanation:

The problem has two distinct requirements: securely managing rotating external API credentials and encrypting CodeBuild artifacts with a specific KMS key.

For credentials that must be reset each month and are sensitive, AWS Secrets Manager is the appropriate service, not Parameter Store. Secrets Manager provides built-in support for secret rotation, versioning, access control, and auditability. The external API credentials can be updated monthly either manually or via an automated rotation Lambda. CodeBuild can read the secret at build time by assuming an IAM role that allows secretsmanager:GetSecretValue.

To encrypt CodeBuild outputs, the correct pattern is to set the CODEBUILD_KMS_KEY_ID environment variable (or CodeBuild project setting) to the desired KMS key. The CodeBuild IAM service role must be granted kms:Encrypt, kms:Decrypt, and related actions on that KMS key via the key policy or IAM policy. This ensures build artifacts and logs are encrypted using the specified customer managed key.

Option C captures both requirements precisely: store credentials in Secrets Manager and update the KMS key policy for the CodeBuild role, not the CodePipeline role.

Options A and B misuse Parameter

Store and/or the wrong IAM principal.

Option D updates the wrong role and does not connect the KMS key to CodeBuild’s artifact encryption.

An online retail company based in the United States plans to expand its operations to Europe and Asia in the next six months. Its product currently runs on Amazon EC2 instances behind an Application Load Balancer. The instances run in an Amazon EC2 Auto Scaling group across multiple Availability Zones. All data is stored in an Amazon Aurora database instance.

When the product is deployed in multiple regions, the company wants a single product catalog across all regions, but for compliance purposes, its customer information and purchases must be kept in each region.

How should the company meet these requirements with the LEAST amount of application changes?

- A . Use Amazon Redshift for the product catalog and Amazon DynamoDB tables for the customer information and purchases.

- B . Use Amazon DynamoDB global tables for the product catalog and regional tables for the customer information and purchases.

- C . Use Aurora with read replicas for the product catalog and additional local Aurora instances in each region for the customer information and purchases.

- D . Use Aurora for the product catalog and Amazon DynamoDB global tables for the customer information and purchases.

A company deploys a web application on Amazon EC2 instances that are behind an Application Load Balancer (ALB). The company stores the application code in an AWS CodeCommit repository. When code is merged to the main branch, an AWS Lambda function invokes an AWS CodeBuild project. The CodeBuild project packages the code, stores the packaged code in AWS CodeArtifact, and invokes AWS Systems Manager Run Command to deploy the packaged code to the EC2 instances.

Previous deployments have resulted in defects, EC2 instances that are not running the latest version of the packaged code, and inconsistencies between instances.

Which combination of actions should a DevOps engineer take to implement a more reliable deployment solution? (Select TWO.)

- A . Create a pipeline in AWS CodePipeline that uses the CodeCommit repository as a source provider.

Configure pipeline stages that run the CodeBuild project in parallel to build and test the application.

In the pipeline, pass the CodeBuild project output artifact to an AWS CodeDeploy action. - B . Create a pipeline in AWS CodePipeline that uses the CodeCommit repository as a source provider. Create separate pipeline stages that run a CodeBuild project to build and then test the application. In the pipeline, pass the CodeBuild project output artifact to an AWS CodeDeploy action.

- C . Create an AWS CodeDeploy application and a deployment group to deploy the packaged code to

the EC2 instances. Configure the ALB for the deployment group. - D . Create individual Lambda functions that use AWS CodeDeploy instead of Systems Manager to run build, test, and deploy actions.

- E . Create an Amazon S3 bucket. Modify the CodeBuild project to store the packages in the S3 bucket instead of in CodeArtifact. Use deploy actions in CodeDeploy to deploy the artifact to the EC2 instances.

BC

Explanation:

Option B: Create a pipeline in AWS CodePipeline that uses the AWS CodeCommit repository as a source provider. Create separate pipeline stages that run a CodeBuild project to build and then test the application. This approach ensures a consistent and controlled build and test process. After the build and test stages, in the pipeline, pass the CodeBuild project output artifact to an AWS CodeDeploy action. CodeDeploy can manage the deployment process, ensuring that all instances are updated consistently and that the deployment only proceeds if the build and test stages are successful.

Option C: Create an AWS CodeDeploy application and a deployment group to deploy the packaged code to the EC2 instances. By using CodeDeploy, you can configure deployment strategies that ensure minimal downtime and enable features like rolling updates, which can help address inconsistencies between instances. Configuring the Application Load Balancer (ALB) for the deployment group allows for integration with the load balancer, which can manage traffic during the deployment process and help ensure zero downtime.

A company uses AWS Organizations to manage its AWS accounts. The company has a root OU that has a child OU. The root OU has an SCP that allows all actions on all resources. The child OU has an SCP that allows all actions for Amazon DynamoDB and AWS Lambda, and denies all other actions.

The company has an AWS account that is named vendor-data in the child OU. A DevOps engineer has an 1AM user that is attached to the Administrator Access 1AM policy in the vendor-data account. The DevOps engineer attempts to launch an Amazon EC2 instance in the vendor-data account but receives an access denied error.

Which change should the DevOps engineer make to launch the EC2 instance in the vendor-data account?

- A . Attach the AmazonEC2FullAccess 1AM policy to the 1AM user.

- B . Create a new SCP that allows all actions for Amazon EC2. Attach the SCP to the vendor-data account.

- C . Update the SCP in the child OU to allow all actions for Amazon EC2.

- D . Create a new SCP that allows all actions for Amazon EC2. Attach the SCP to the root OU.

C

Explanation:

The correct answer is C. Updating the SCP in the child OU to allow all actions for Amazon EC2 will enable the DevOps engineer to launch the EC2 instance in the vendor-data account. SCPs are applied to OUs and accounts in a hierarchical manner, meaning that the SCPs attached to the parent OU are inherited by the child OU and accounts. Therefore, the SCP in the child OU overrides the SCP in the root OU and denies all actions except for DynamoDB and Lambda. By adding EC2 to the allowed actions in the child OU’s SCP, the DevOps engineer can access EC2 resources in the vendor-data account.

Option A is incorrect because attaching the AmazonEC2FullAccess IAM policy to the IAM user will not grant the user access to EC2 resources. IAM policies are evaluated after SCPs, so even if the IAM policy allows EC2 actions, the SCP will still deny them.

Option B is incorrect because creating a new SCP that allows all actions for EC2 and attaching it to the vendor-data account will not work. SCPs are not cumulative, meaning that only one SCP is applied to an account at a time. The SCP attached to the account will be the SCP attached to the OU that contains the account. Therefore, option B will not change the SCP that is applied to the vendor-data account.

Option D is incorrect because creating a new SCP that allows all actions for EC2 and attaching it to the root OU will not work. As explained earlier, the SCP in the child OU overrides the SCP in the root OU and denies all actions except for DynamoDB and Lambda. Therefore, option D will not affect the SCP that is applied to the vendor-data account.

A company is using AWS Organizations and wants to implement a governance strategy with the following requirements:

AWS resource access is restricted to the same two Regions for all accounts.

AWS services are limited to a specific group of authorized services for all accounts.

Authentication is provided by Active Directory.

Access permissions are organized by job function and are identical in each account.

Which solution will meet these requirements?

- A . Establish an organizational unit (OU) with group policies in the management account to restrict Regions and authorized services. Use AWS CloudFormation StackSets to provision roles with permissions for each job function, including an IAM trust policy for IAM identity provider authentication in each account.

- B . Establish a permission boundary in the management account to restrict Regions and authorized services. Use AWS CloudFormation StackSets to provision roles with permissions for each job function, including an IAM trust policy for IAM identity provider authentication in each account.

- C . Establish a service control policy in the management account to restrict Regions and authorized services. Use AWS Resource Access Manager (AWS RAM) to share management account roles with permissions for each job function, including AWS IAM Identity Center for authentication in each account.

- D . Establish a service control policy (SCP) in the management account to restrict Regions and authorized services. Use AWS CloudFormation StackSets to provision roles with permissions for each job function, including an IAM trust policy for IAM identity provider authentication in each account.

D

Explanation:

This is a classic AWS Organizations governance design:

Restrict Regions and allowed services across all accounts

The correct Organizations mechanism for account-wide guardrails is a Service Control Policy (SCP).

SCPs set the maximum permissions that accounts/OUs can use, making them the right tool to enforce “only these Regions” and “only these services” consistently across the org.

Authentication from Active Directory + consistent job-function roles in every account

With AD as the identity source, the standard approach is federation to AWS using an IAM identity provider (SAML) and role assumption based on job function.

AWS CloudFormation StackSets is the operationally efficient way to deploy identical IAM roles/policies into multiple accounts/OUs so job-function permissions are consistent everywhere.

The roles’ trust policies can be configured to allow federated identities (from the AD-backed IdP) to assume the correct job role in each account.

Why the other options don’t fit:

A “OU with group policies” isn’t an AWS Organizations control mechanism for restricting Regions/services. The AWS-native control for this is SCPs, not “group policies.”

B Permissions boundaries are useful to limit what an IAM principal/role can do within an account, but they are not the org-wide enforcement mechanism for “all accounts must stay in these Regions / use only these services.” That’s what SCPs do.

C AWS RAM is for sharing resources (like subnets, Transit Gateway, etc.), not for “sharing roles” across accounts as a governance baseline. Also, the question emphasizes identical permissions in each account, which is best achieved by provisioning roles in each account (StackSets), not attempting to “share” roles.

A company is using an organization in AWS Organizations to manage multiple AWS accounts. The

company’s development team wants to use AWS Lambda functions to meet resiliency requirements and is rewriting all applications to work with Lambda functions that are deployed in a VPC. The development team is using Amazon Elastic Pile System (Amazon EFS) as shared storage in Account A in the organization.

The company wants to continue to use Amazon EPS with Lambda Company policy requires all serverless projects to be deployed in Account B.

A DevOps engineer needs to reconfigure an existing EFS file system to allow Lambda functions to access the data through an existing EPS access point.

Which combination of steps should the DevOps engineer take to meet these requirements? (Select THREE.)

- A . Update the EFS file system policy to provide Account B with access to mount and write to the EFS file system in Account A.

- B . Create SCPs to set permission guardrails with fine-grained control for Amazon EFS.

- C . Create a new EFS file system in Account B Use AWS Database Migration Service (AWS DMS) to keep data from Account A and Account B synchronized.

- D . Update the Lambda execution roles with permission to access the VPC and the EFS file system.

- E . Create a VPC peering connection to connect Account A to Account B.

- F . Configure the Lambda functions in Account B to assume an existing IAM role in Account A.

ADE

Explanation: