Practice Free AI-901 Exam Online Questions

HOTSPOT

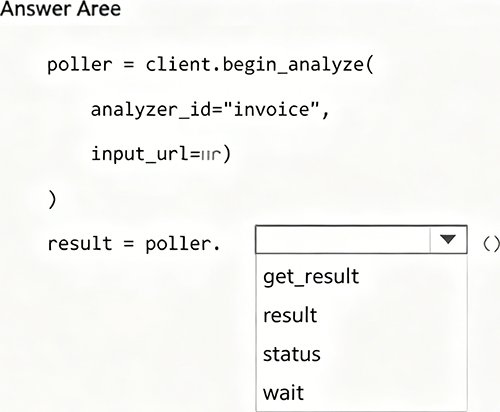

You are developing an application that analyzes invoices by using Azure Content Understanding in Foundry Tools.

You need to ensure that the application retrieves the analysis results after processing completes.

How should you complete the Python code? To answer, select the appropriate option in the answer area. NOTE: Each correct selection is worth one point.

Explanation:

The completed code is:

    poller = client.begin_analyze(
        analyzer_id="invoice",
        input_url=url
    )
    result = poller.result()

Azure Content Understanding analysis uses a long-running operation pattern. The Python SDK returns a poller from begin_analyze(), and Microsoft documentation states that the SDK poller handles polling automatically when you call .result().

Therefore, to retrieve the analysis results after processing completes, the correct option is:

result

The other options are incorrect: status only reports the operation state, wait blocks until the operation finishes without returning the final analysis object, and get_results is not the method used to retrieve the begin_analyze() result in this code pattern.
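The difference between status, wait, and result can be illustrated with a toy poller. This is only a sketch: FakeAnalyzePoller, its constructor arguments, the status strings, and the sample invoice field are hypothetical stand-ins, not the real SDK class, and no Azure service is called.

```python
import time


class FakeAnalyzePoller:
    """Hypothetical stand-in for the poller returned by begin_analyze()."""

    def __init__(self, polls_until_done, final_result):
        self._remaining = polls_until_done
        self._final_result = final_result

    def status(self):
        # Reports the operation state only; never returns the analysis payload.
        return "Succeeded" if self._remaining == 0 else "Running"

    def wait(self, interval=0.0):
        # Blocks until the operation finishes, but returns None.
        while self._remaining > 0:
            time.sleep(interval)
            self._remaining -= 1

    def result(self, interval=0.0):
        # Blocks until the operation finishes, then returns the final object.
        self.wait(interval)
        return self._final_result


# Made-up analysis payload, used for illustration only.
poller = FakeAnalyzePoller(3, {"fields": {"InvoiceId": "INV-100"}})
status_before = poller.status()
wait_return = FakeAnalyzePoller(1, {"fields": {}}).wait()
result = poller.result()
status_after = poller.status()
```

In this sketch only result() hands back the analysis object, which mirrors why result is the option that satisfies the requirement in the question.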

HOTSPOT

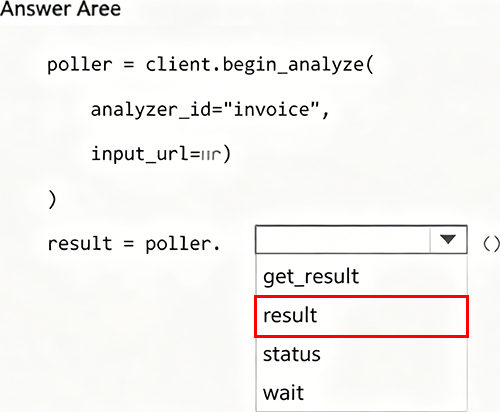

Select the answer that correctly completes the sentence.

Explanation:

The completed sentence is:

Information extraction solutions that detect and read text in scanned documents and images rely on computer vision.

Detecting and reading text in scanned documents and images is typically done with OCR, which is a computer vision capability. Microsoft describes OCR as text recognition/text extraction that extracts printed or handwritten text from images and documents.

HOTSPOT

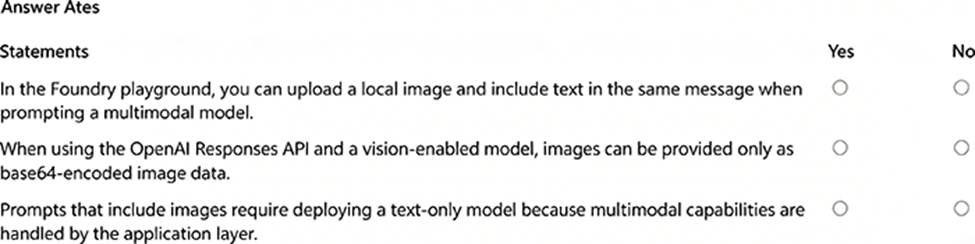

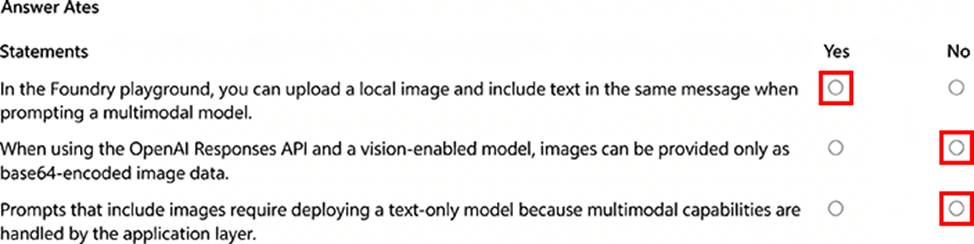

For each of the following statements, select Yes if the statement is true. Otherwise, select No. NOTE: Each correct selection is worth one point.

Explanation:

Statement 1: In the Foundry playground, you can upload a local image and include text in the same message when prompting a multimodal model. = Yes

Microsoft documentation for vision-enabled chat models states that in the chat session pane, you can select the attachment button, upload an image, add a text prompt such as “Describe this image,” and then submit it.

Statement 2: When using the OpenAI Responses API and a vision-enabled model, images can be provided only as base64-encoded image data. = No

Images are not limited to base64 data. The Responses API supports image input through an image URL, and Azure OpenAI documentation also describes examples using base64 encoded image data.
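As a rough illustration of the two input styles, the sketch below builds a Responses-style message with either an image URL or base64 data wrapped in a data URL. The helper name build_image_message, the example URL, and the sample bytes are assumptions made for illustration; the code only constructs the payload and does not call any service.

```python
import base64


def build_image_message(prompt, image_url=None, image_bytes=None, mime_type="image/png"):
    """Build a user message containing text plus one image (hypothetical helper)."""
    if (image_url is None) == (image_bytes is None):
        raise ValueError("Provide exactly one of image_url or image_bytes")
    if image_bytes is not None:
        # Base64 data is passed as a data URL in the same image_url field.
        encoded = base64.b64encode(image_bytes).decode("ascii")
        image_url = f"data:{mime_type};base64,{encoded}"
    return [
        {
            "role": "user",
            "content": [
                {"type": "input_text", "text": prompt},
                {"type": "input_image", "image_url": image_url},
            ],
        }
    ]


# Option 1: reference an image by URL (placeholder address).
from_url = build_image_message("Describe this image", image_url="https://example.com/cat.png")

# Option 2: embed local image bytes as base64 (placeholder bytes, not a real image).
from_bytes = build_image_message("Describe this image", image_bytes=b"abc")
```

Either list could then be passed as the input argument of a Responses API call against a vision-enabled deployment.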

Statement 3: Prompts that include images require deploying a text-only model because multimodal capabilities are handled by the application layer. = No

Image prompts require a vision-enabled multimodal model, not a text-only model. Azure OpenAI vision-capable models accept image and text inputs directly.

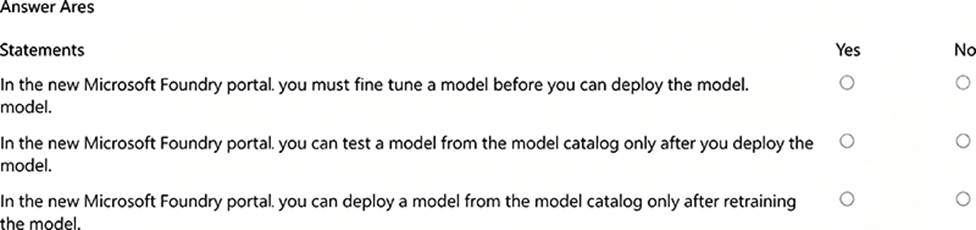

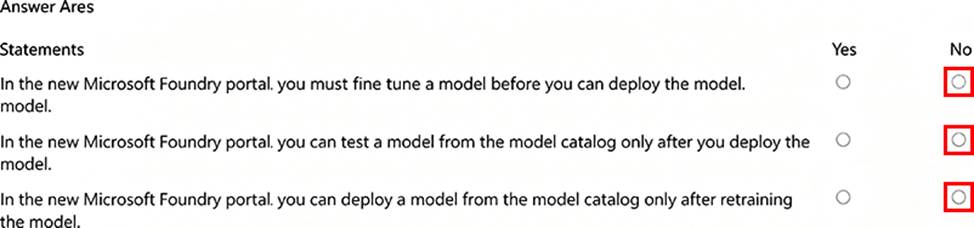

HOTSPOT

For each of the following statements, select Yes if the statement is true. Otherwise, select No. NOTE: Each correct selection is worth one point.

Explanation:

Statement 1: In the new Microsoft Foundry portal, you must fine-tune a model before you can deploy the model. = No

Fine-tuning is optional. Microsoft’s Foundry model deployment documentation describes deploying Foundry Models directly from the model catalog for inference. It does not require fine-tuning first.

Statement 2: In the new Microsoft Foundry portal, you can test a model from the model catalog only after you deploy the model. = No

Microsoft documentation states that some Foundry Tools are available to try via the model catalog without a project, and Foundry playgrounds are used for prototyping and validation before production. Therefore, the statement using “only after you deploy” is too restrictive.

Statement 3: In the new Microsoft Foundry portal, you can deploy a model from the model catalog only after retraining the model. = No

Retraining/fine-tuning is not required before deployment. Microsoft states that after you deploy a Foundry Model, you can interact with it in the Foundry Playground and use it from code, and the deployment workflow starts by selecting a model from the model catalog and choosing Deploy.

You have a Microsoft Foundry project that contains a generative AI model deployment.

You test the model by using the Foundry playground.

You need to develop an application that sends requests to the deployed model.

Which information must the application include to call the model?

- A . The model training dataset

- B . The Foundry project display name

- C . The exported playground session history

- D . The model endpoint and authentication credentials

D

Explanation:

To call a deployed Azure OpenAI model from an application, the app must know the service endpoint and authenticate its request. Microsoft documentation states that Azure OpenAI supports API key authentication or Microsoft Entra ID authentication, and API key authentication requires including the API key in the request. Microsoft quickstart guidance also states that to successfully make a call against Azure OpenAI, you need an endpoint and a key.

The application does not need the model training dataset, the Foundry project display name, or exported playground session history to call the deployed model.
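A minimal sketch of how an application might gather those two pieces of information before making a call. The environment variable names AZURE_OPENAI_ENDPOINT and AZURE_OPENAI_API_KEY, the helper load_connection_settings, and the sample values are assumptions chosen for illustration.

```python
import os


def load_connection_settings(env=None):
    """Return the endpoint and API key, failing early if either is missing."""
    env = os.environ if env is None else env
    required = ("AZURE_OPENAI_ENDPOINT", "AZURE_OPENAI_API_KEY")  # assumed names
    missing = [name for name in required if not env.get(name)]
    if missing:
        raise RuntimeError("Missing settings: " + ", ".join(missing))
    return {
        "endpoint": env["AZURE_OPENAI_ENDPOINT"].rstrip("/"),
        "api_key": env["AZURE_OPENAI_API_KEY"],
    }


# Example with an explicit mapping instead of the real process environment.
settings = load_connection_settings(
    {
        "AZURE_OPENAI_ENDPOINT": "https://resource1.openai.azure.com/",
        "AZURE_OPENAI_API_KEY": "<your-api-key>",
    }
)
```

Nothing in this sketch needs the training dataset, the project display name, or playground history, which matches the reasoning for answer D.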

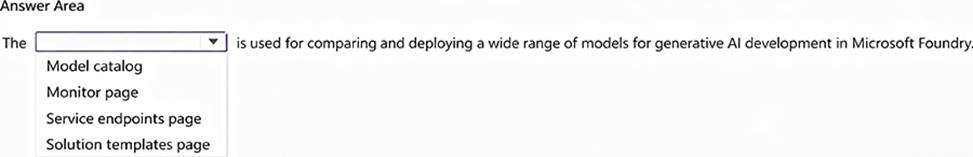

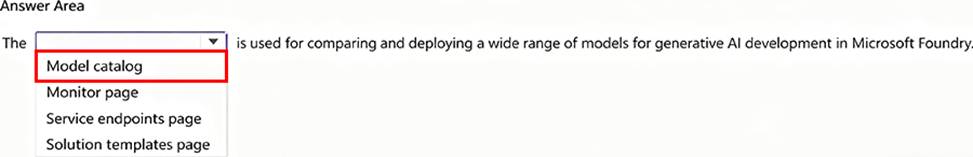

HOTSPOT

Select the answer that correctly completes the sentence.

Explanation:

The Model catalog is used for comparing and deploying a wide range of models for generative AI development in Microsoft Foundry.

Microsoft documentation states that the model catalog in Foundry portal is the hub for discovering and using a wide range of models to build generative AI applications. It also includes many models across providers such as Azure OpenAI, Mistral, Meta, Cohere, NVIDIA, and Hugging Face.

The Microsoft Learn module for Foundry models also states that the model catalog is used to explore and filter models, compare models using benchmark metrics, and deploy a model to an endpoint.

You are using the Azure Speech SDK to develop a Python application that supports real-time spoken conversations.

Which Azure speech class should you use to configure the connection to the Azure Speech service?

- A . AudioConfig

- B . SpeechSynthesizer

- C . AudioOutputConfig

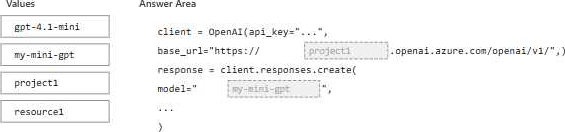

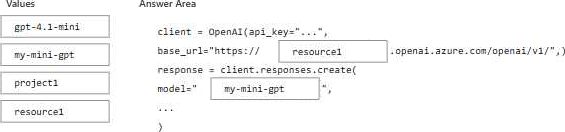

DRAG DROP

You have a Microsoft Foundry project named project1 that contains an Azure OpenAI resource named Resource1.

To Resource1, you deploy a gpt-4.1-mini model by using a model deployment named my-mini-gpt.

You need to connect to my-mini-gpt from an application.

How should you complete the Python code? To answer, drag the appropriate values to the correct targets. Each value may be used once, more than once, or not at all. NOTE: Each correct selection is worth one point.

Explanation:

    client = OpenAI(
        api_key="…",
        base_url="https://resource1.openai.azure.com/openai/v1/",
    )

    response = client.responses.create(
        model="my-mini-gpt",
        …
    )

For Azure OpenAI in Microsoft Foundry, the base_url uses the Azure OpenAI resource name in the endpoint format:

https://<resource-name>.openai.azure.com/openai/v1/

In the question, the Azure OpenAI resource is named Resource1, so the first blank must be resource1. Microsoft documentation for Azure OpenAI v1 endpoints confirms that the endpoint must use the …openai.azure.com/openai/v1/ path.

For the model parameter, Azure OpenAI requires the deployment name, not the underlying model name. Microsoft states that Azure OpenAI always requires the deployment name when calling APIs, even when the parameter is named model.

The deployed model is gpt-4.1-mini, but the deployment name is my-mini-gpt. Therefore, the second blank must be:

model="my-mini-gpt"

So the correct selections are:

base_url blank = resource1

model blank = my-mini-gpt
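The endpoint format described above can be sketched as a small helper that derives base_url from the resource name. The function names azure_openai_v1_base_url and client_kwargs are hypothetical, and lowercasing the resource name simply follows the resource1 spelling used in the answer.

```python
def azure_openai_v1_base_url(resource_name: str) -> str:
    """Build the v1 base URL from an Azure OpenAI resource name."""
    name = resource_name.strip().lower()
    if not name:
        raise ValueError("resource_name must not be empty")
    return f"https://{name}.openai.azure.com/openai/v1/"


def client_kwargs(resource_name: str, api_key: str) -> dict:
    # These keyword arguments mirror the OpenAI(...) call in the answer.
    return {"api_key": api_key, "base_url": azure_openai_v1_base_url(resource_name)}


kwargs = client_kwargs("Resource1", api_key="<your-api-key>")
print(kwargs["base_url"])  # https://resource1.openai.azure.com/openai/v1/
```

The resulting dictionary could be unpacked into the client constructor, while the deployment name my-mini-gpt is supplied separately as the model argument of each request.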

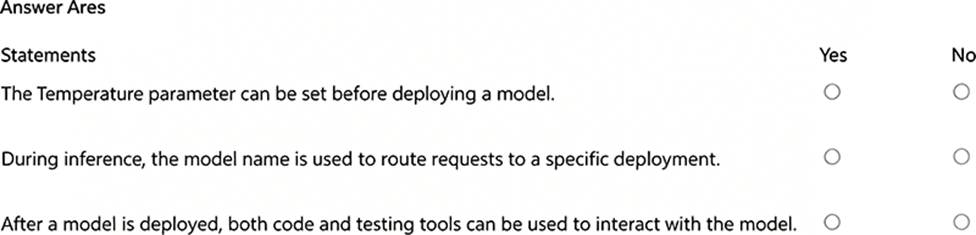

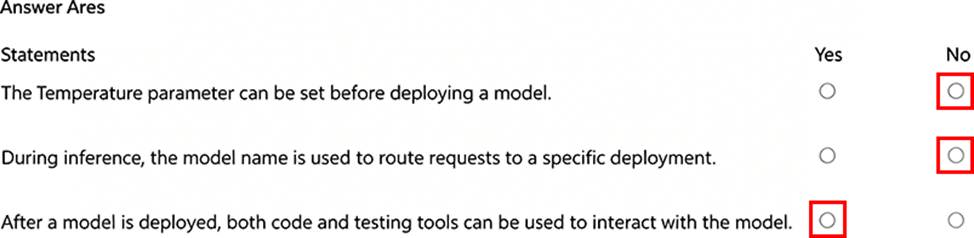

HOTSPOT

For each of the following statements, select Yes if the statement is true. Otherwise, select No. NOTE: Each correct selection is worth one point.

Explanation:

Statement 1: The Temperature parameter can be set before deploying a model. = No

Temperature is an inference (request) parameter that is supplied when calling or testing a deployed model. It controls randomness in generated responses and is not a setting of the deployment itself.

Statement 2: During inference, the model name is used to route requests to a specific deployment. = No

In Azure OpenAI / Microsoft Foundry deployments, application requests are routed to a specific deployment name, even when the SDK parameter is called model. The underlying model name, such as gpt-4.1-mini, is not what routes the request to the deployment.

Statement 3: After a model is deployed, both code and testing tools can be used to interact with the model. = Yes

After deployment, you can test the model in Foundry playground/testing tools or call the deployment from application code by using the endpoint, deployment name, and authentication credentials.
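To make the first two statements concrete, the sketch below assembles per-request arguments: the model field carries the deployment name, and temperature travels with each request rather than with the deployment. The helper build_response_request and the 0.0 to 2.0 range check are assumptions for illustration; the code builds a dictionary and sends nothing.

```python
def build_response_request(deployment_name, prompt, temperature=None):
    """Assemble keyword arguments for a single inference request (hypothetical helper)."""
    # "model" holds the deployment name, not the underlying model name.
    request = {"model": deployment_name, "input": prompt}
    if temperature is not None:
        # Assumed valid range; check the service documentation for exact limits.
        if not 0.0 <= temperature <= 2.0:
            raise ValueError("temperature must be between 0.0 and 2.0")
        request["temperature"] = temperature
    return request


# The same deployment can be called with different temperatures per request.
focused = build_response_request("my-mini-gpt", "Summarize this invoice.", temperature=0.2)
creative = build_response_request("my-mini-gpt", "Write a tagline.", temperature=1.0)
default = build_response_request("my-mini-gpt", "Hello")
```

Because temperature is part of each request, two calls to the same deployment can use different values without redeploying anything.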

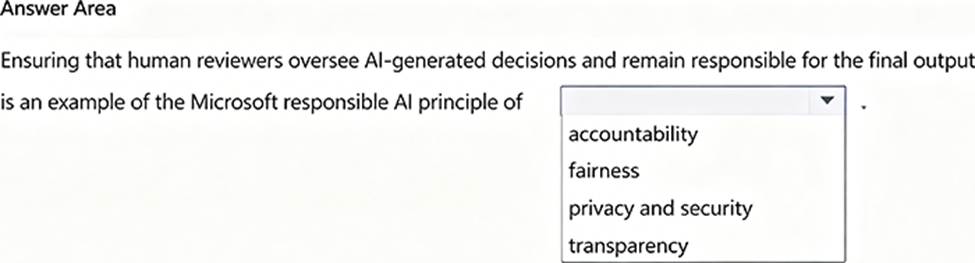

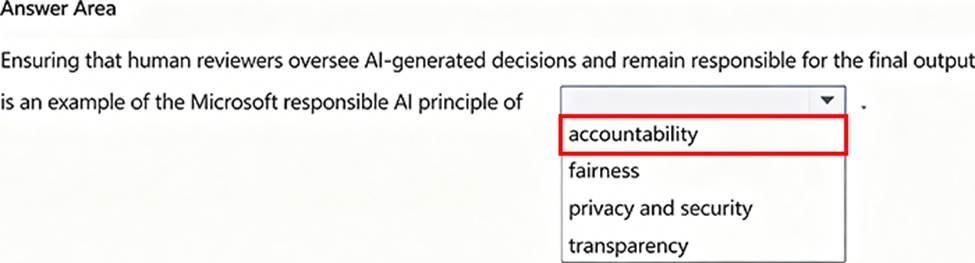

HOTSPOT

Select the answer that correctly completes the sentence.

Explanation:

The completed sentence is:

Ensuring that human reviewers oversee AI-generated decisions and remain responsible for the final output is an example of the Microsoft responsible AI principle of accountability.

Accountability means people and organizations remain responsible for AI systems and their effects.

Human review and responsibility for final decisions are examples of accountability.

The other options are incorrect:

- Fairness focuses on avoiding bias and treating people fairly.

- Privacy and security focuses on protecting data and restricting access.

- Transparency focuses on explaining AI use, capabilities, and limitations.