Practice Free 3V0-25.25 Exam Online Questions

An administrator has a vSphere 8 Update 1a with NSX 4.1.0.2 environment.

What option can the administrator use to converge this vSphere with NSX environment into a VMware Cloud Foundation (VCF) Workload Domain?

- A . Use the VCF installer to automatically converge the vSphere with NSX environment into a new VCF Workload Domain.

- B . Upgrade NSX to version 9 into the vSphere 8 environment and use the VCF installer to converge the vSphere 8 with NSX environment into a new VCF Workload Domain.

- C . Upgrade the environment version and use the VCF installer to converge the vSphere environment into a new VCF Workload Domain.

- D . Upgrade the environment and use VCF Operations to converge the vSphere environment into a new VCF Workload Domain.

A

Explanation:

Comprehensive and Detailed 250 to 350 words of Explanation From VMware Cloud Foundation (VCF) documents:

The process of transforming an existing "brownfield" environment into VCF-managed infrastructure is known as convergence. VMware provides tooling specifically for this purpose: the VCF Import Tool in VCF 5.x and the VCF Installer in VCF 9.0.

An environment running vSphere 8 Update 1a and NSX 4.1.0.2 is within the supported compatibility matrix for VCF 5.x convergence. The most direct and verified method (Option A) is to use the VCF Installer to "ingest" the existing vCenter and NSX Manager. During this process, the installer validates the current configuration, ensures the hosts are compatible, and then brings them under the management of a newly deployed SDDC Manager.

One of the significant advantages of this approach is that it avoids the need for a "rip and replace" of the existing networking. The VCF Installer identifies the existing NSX Manager and the logical networking constructs. Once the convergence is successful, the environment is treated as a standard VCF Workload Domain.

Options B and C are incorrect because VCF's design principle is to perform the convergence at a known, stable, and compatible version and only then use the SDDC Manager's Lifecycle Management (LCM) to perform upgrades. Manually upgrading to version 9 prior to convergence can introduce configuration drift that the VCF Installer may not be able to reconcile.

Option D is incorrect as VCF Operations (formerly vRealize Operations) is a monitoring and optimization tool; it does not have the administrative capability to perform the structural convergence of the SDDC stack. Therefore, the automated convergence via the VCF Installer is the correct architectural path.

HOTSPOT

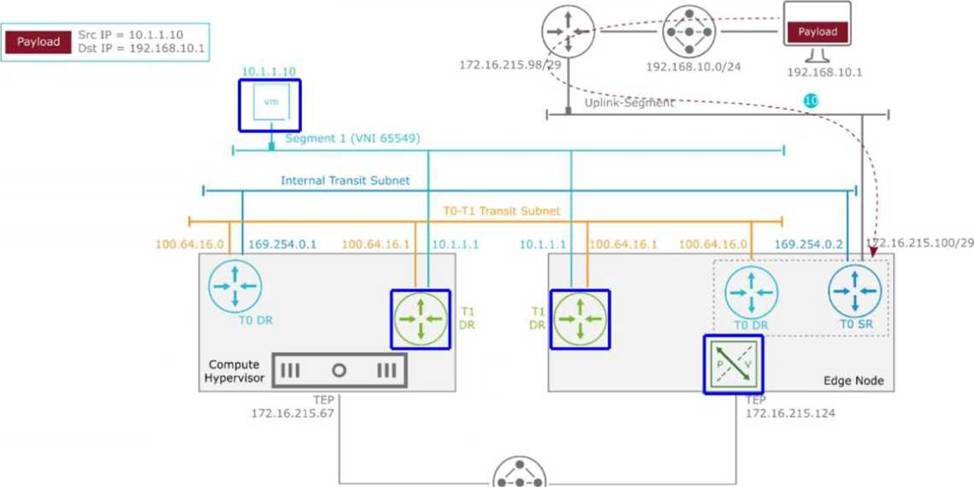

An administrator is troubleshooting the packet flow of an incoming ICMP Reply destined for 10.1.1.10 in the diagram.

The packet arrived at the Tier-0 SR at 172.16.215.100/29.

Which highlighted location identifies the next hop in the path to the destination?

Explanation:

In a VMware Cloud Foundation (VCF) environment, North-South traffic flows through a hierarchical routing structure composed of Tier-0 and Tier-1 Gateways. Each gateway is further divided into a Distributed Router (DR) component, which runs as a kernel module on all Transport Nodes (ESXi and Edges), and a Service Router (SR), which provides centralized services and resides on the Edge Nodes.

According to the packet walk logic for an incoming (North-to-South) packet, once the traffic arrives from the physical router at the Tier-0 Service Router (SR) on the Edge Node, it must be routed toward the destination virtual machine (10.1.1.10). In a multi-tier NSX architecture, the Tier-0 SR identifies that the destination subnet belongs to a connected Tier-1 Gateway. The communication between the Tier-0 and Tier-1 gateways occurs over an internal transit subnet, often referred to as the Router Link (in this diagram, represented by the 100.64.16.0/31 subnet).

The "Next Hop" for the packet currently at the Tier-0 SR on the Edge Node is the Tier-1 Distributed Router (DR) instance on that same Edge Node. This is because the Edge Node participates as a Transport Node in the overlay and maintains local instances of all Distributed Routers to ensure efficient path processing. After the local Tier-1 DR on the Edge Node processes the packet, it determines that the destination VM resides on a remote host (Compute Hypervisor). Only then is the packet encapsulated in a Geneve header and sent via the Tunnel Endpoints (TEP) from the Edge Node (172.16.215.124) to the Compute Hypervisor (172.16.215.67). Therefore, the Tier-1 DR on the Edge Node is the immediate logical next step in the routing pipeline, before any host-to-host encapsulation occurs.
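The next-hop decision above is a longest-prefix-match lookup. The sketch below models it with Python's `ipaddress` module, assuming a hypothetical Tier-0 SR table in which 10.1.1.0/24 is learned from the Tier-1 gateway over the router link and the Tier-1 side of that link is 100.64.16.1. Those prefixes and addresses are illustrative assumptions, not values read from the diagram.

```python
import ipaddress
from typing import List, Tuple

# Hypothetical Tier-0 SR routing table: (prefix, next-hop description).
# Prefixes and next-hop addresses are assumptions for illustration only.
T0_SR_ROUTES: List[Tuple[str, str]] = [
    ("0.0.0.0/0", "physical router (uplink)"),
    ("100.64.16.0/31", "connected: T0-T1 router link"),
    ("10.1.1.0/24", "Tier-1 DR via router link 100.64.16.1"),
]


def next_hop(dst: str, routes: List[Tuple[str, str]] = T0_SR_ROUTES) -> str:
    """Return the next hop for dst using longest-prefix match."""
    addr = ipaddress.ip_address(dst)
    best_len = -1
    best_hop = None
    for prefix, hop in routes:
        net = ipaddress.ip_network(prefix)
        # Keep the most specific (longest) prefix that contains the address.
        if addr in net and net.prefixlen > best_len:
            best_len, best_hop = net.prefixlen, hop
    if best_hop is None:
        raise LookupError(f"no route to {dst}")
    return best_hop


print(next_hop("10.1.1.10"))  # Tier-1 DR via router link 100.64.16.1
print(next_hop("8.8.8.8"))    # physical router (uplink)
```

The default route matches everything, so the more specific /24 wins for 10.1.1.10 and the packet is handed to the Tier-1 DR rather than back toward the physical router.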

An administrator is troubleshooting east-west network performance between several virtual machines connected to the same logical segment. The administrator inspects the internal forwarding tables used by ESXi and notices that different tables exist for MAC and IP mapping.

Which table on an ESXi host is used to determine the location of a particular workload for frame forwarding?

- A . ARP Table

- B . FIP Table

- C . TEP Table

- D . MAC Table

D

Explanation:

In the context of VMware Cloud Foundation (VCF) networking, understanding how an ESXi host (acting as a Transport Node) handles East-West traffic is fundamental. East-West traffic refers to communication between workloads within the same data center, often on the same logical segment.

When a Virtual Machine sends a frame to another VM on the same logical segment, the ESXi host’s virtual switch must determine the "location" of the destination MAC address to perform frame forwarding. The MAC Table (also known as the Forwarding Table or L2 Table) is the primary structure used for this decision. For each logical segment, the host maintains a MAC table that maps the MAC addresses of virtual machines to their specific "locations."

If the destination VM resides on the same host, the MAC table points the frame toward the internal port (vUUID) associated with that VM's vNIC. If the destination VM is on a different host (in an overlay environment), the MAC table entry for that remote MAC address points to the Tunnel End Point (TEP) IP of the remote ESXi host. While the TEP table (Option C) contains the list of known Tunnel Endpoints and the ARP table (Option A) maps IP addresses to MAC addresses, neither is the primary table used for the final frame forwarding decision.
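A minimal model of that lookup, assuming a MAC table keyed by (segment VNI, MAC address) in which each entry resolves either to a local port or to a remote host's TEP IP. The class names, the VNI 65537, and the addresses are hypothetical placeholders; real hosts also handle unknown destinations through BUM replication, which this sketch does not model.

```python
from dataclasses import dataclass
from typing import Dict, Optional, Tuple, Union


@dataclass(frozen=True)
class LocalPort:
    port_id: str  # hypothetical identifier of a local vNIC port


@dataclass(frozen=True)
class RemoteTep:
    tep_ip: str  # TEP IP address of the remote ESXi host


Location = Union[LocalPort, RemoteTep]
# Per-segment MAC table: (VNI, MAC) -> where that MAC lives.
MacTable = Dict[Tuple[int, str], Location]


def forward(table: MacTable, vni: int, dst_mac: str) -> str:
    """Decide how to forward a frame on segment `vni` to `dst_mac`."""
    loc: Optional[Location] = table.get((vni, dst_mac.lower()))
    if isinstance(loc, LocalPort):
        return f"deliver locally to {loc.port_id}"
    if isinstance(loc, RemoteTep):
        return f"GENEVE-encapsulate on VNI {vni} to TEP {loc.tep_ip}"
    return "unknown destination: BUM handling (not modeled)"


# Illustrative entries only (keys stored lowercase).
table: MacTable = {
    (65537, "00:50:56:aa:00:01"): LocalPort("vm1-vnic0"),
    (65537, "00:50:56:aa:00:02"): RemoteTep("192.0.2.12"),
}
print(forward(table, 65537, "00:50:56:AA:00:02"))
# GENEVE-encapsulate on VNI 65537 to TEP 192.0.2.12
```

Keying by VNI as well as MAC reflects that each logical segment has its own table, so the same MAC on two segments does not collide.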

The MAC Table is the authoritative source for Layer 2 forwarding. In an NSX-managed VCF environment, these tables are dynamically populated and synchronized via the Local Control Plane (LCP), which receives updates from the Central Control Plane. This ensures that even as VMs move via vMotion, the MAC table remains updated across all transport nodes, allowing for seamless East-West connectivity without the need for traditional MAC learning (flooding) in the physical fabric.

An administrator has a standalone vSphere 8.0 Update 1a deployment that is running with VMware NSX 4.1.0.2 and has to converge the deployment into a new VMware Cloud Foundation (VCF) instance.

How can the administrator accomplish this task?

- A . Manually upgrade both vSphere and NSX to version 9 prior to converging. Then use the VCF Installer to converge the vSphere 9 and NSX 9 instances into a new VCF management domain.

- B . Manually upgrade vSphere to version 9. Then use the VCF Installer to converge the vSphere 9 environment into a new VCF management domain. Then use the VCF lifecycle management tools to upgrade NSX to version 9.

- C . Use the VCF Installer to converge the existing vSphere 8 and NSX 4 environment into a new VCF management domain. Then use the VCF lifecycle management tools to upgrade to 9.

- D . Manually upgrade vSphere to version 9 and uninstall NSX 4. Then use the VCF Installer to converge the vSphere 9.0 environment into a new VCF management domain at which time NSX 9 will be reinstalled.

C

Explanation:

The process of bringing existing infrastructure under VCF management is known as "VCF Import" or "Convergence." This is a common path for organizations transitioning from siloed management to the full SDDC stack provided by Cloud Foundation.

According to the VCF 5.x and 9.0 documentation, the VCF Installer (specifically the Cloud Foundation Builder and the Import Tool) is designed to ingest existing environments. The verified best practice is to converge the environment at its current, supported version, provided it meets the minimum baseline requirements for the VCF version you are deploying.
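The "converge at the current version if it meets the baseline, then upgrade" rule can be sketched as a simple gate. The values in `MIN_BASELINE` and the dotted version strings are hypothetical placeholders; the real minimums come from the release notes of the VCF version being deployed.

```python
from typing import Dict, List

# Hypothetical minimum versions for convergence; not official values.
MIN_BASELINE: Dict[str, str] = {"vcenter": "8.0.1", "nsx": "4.1.0"}


def version_at_least(current: str, minimum: str) -> bool:
    """Compare dotted numeric versions, padding the shorter one with zeros."""
    a = [int(p) for p in current.split(".")]
    b = [int(p) for p in minimum.split(".")]
    width = max(len(a), len(b))
    a += [0] * (width - len(a))
    b += [0] * (width - len(b))
    return a >= b


def convergence_plan(current: Dict[str, str],
                     baseline: Dict[str, str] = MIN_BASELINE) -> List[str]:
    """Return the ordered steps, or a blocking message if below baseline."""
    gaps = [c for c, m in baseline.items() if not version_at_least(current[c], m)]
    if gaps:
        return ["blocked: below baseline for " + ", ".join(gaps)]
    return [
        "converge existing vCenter and NSX with the VCF Installer",
        "upgrade to 9.0 with VCF lifecycle management",
    ]


# Assumption: vCenter 8.0 U1a is represented here as 8.0.1.00100.
print(convergence_plan({"vcenter": "8.0.1.00100", "nsx": "4.1.0.2"}))
```

The ordering of the returned steps is the point: convergence comes first at the existing versions, and the upgrade is delegated to VCF lifecycle management afterward.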

In this scenario, vSphere 8.0 U1 and NSX 4.1 are compatible versions that can be imported into a VCF management framework. By using the VCF Installer to converge the existing environment first (Option C), the SDDC Manager takes ownership of the existing vCenter and NSX Manager. Once the environment is "VCF-aware," the administrator gains the benefit of SDDC Manager’s Lifecycle Management (LCM).

The SDDC Manager then orchestrates the multi-step upgrade to version 9.0, strictly following the automated Bill of Materials (BOM) so that vCenter, ESXi, and NSX components remain compatible. Attempting to manually upgrade components to version 9 before convergence (Options A and B) or uninstalling NSX (Option D) creates a "Frankenstein" environment that may not align with the VCF BOM, causing the automated convergence process to fail or resulting in an unsupported configuration. The principle of VCF is to bring the environment in first, then let VCF manage the upgrades.

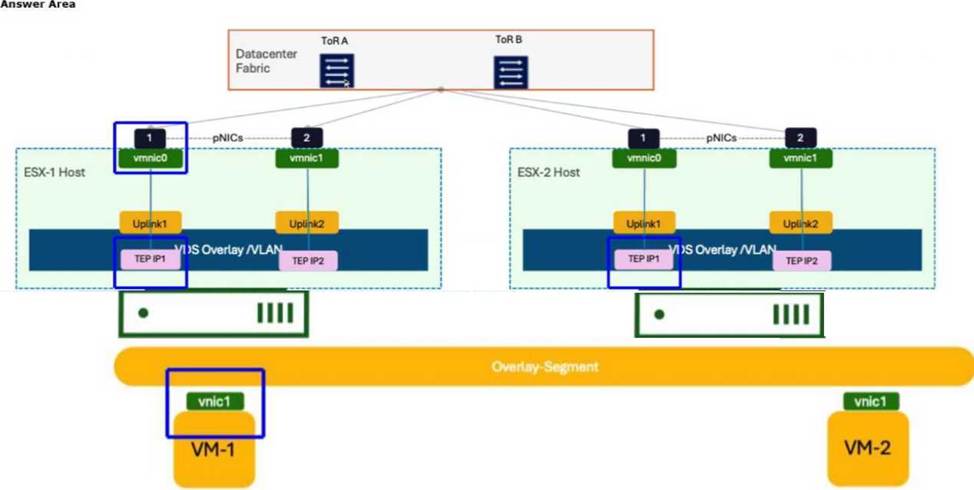

HOTSPOT

The administrator is verifying GENEVE encapsulation by reviewing a packet capture in Wireshark.

The administrator instructed VM-1 to send a continuous ICMP request directed at VM-2.

Click to highlight where the administrator should observe the GENEVE encapsulated packet.

Explanation:

In a VMware Cloud Foundation (VCF) environment, the GENEVE (Generic Network Virtualization Encapsulation) protocol is the industry-standard tunnel format used by NSX to create an overlay network. This protocol allows Layer 2 traffic from virtual machines to be "tunneled" over a Layer 3 physical IP fabric, enabling workloads to communicate as if they were on the same segment even when separated by physical routers.

When VM-1 on ESX-1 sends an ICMP request to VM-2 on ESX-2, the packet starts as a standard Ethernet frame at the virtual machine’s vnic1. At this stage, the packet contains no encapsulation. As the frame enters the Virtual Distributed Switch (VDS) and hits the Tunnel End Point (TEP), the host’s kernel performs the encapsulation process. The TEP adds a GENEVE header, a UDP header (port 6081), and an outer IP header.
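To make the added header concrete, the sketch below packs and parses the 8-byte GENEVE base header defined in RFC 8926 (version, option length, O/C flags, protocol type, 24-bit VNI). It uses Protocol Type 0x6558 (Transparent Ethernet Bridging) for an inner Ethernet frame. NSX also carries its own GENEVE options, which this sketch only passes through as opaque bytes, and the VNI 5001 in the usage line is a hypothetical value.

```python
import struct

GENEVE_UDP_PORT = 6081   # IANA-assigned UDP destination port for GENEVE
PROTO_ETHERNET = 0x6558  # Transparent Ethernet Bridging (inner L2 frame)


def build_geneve_header(vni: int, options: bytes = b"") -> bytes:
    """Pack a GENEVE base header (RFC 8926) followed by raw option bytes."""
    if not 0 <= vni < 1 << 24:
        raise ValueError("VNI must fit in 24 bits")
    if len(options) % 4 or len(options) > 63 * 4:
        raise ValueError("options must be a multiple of 4 bytes, max 252")
    ver_optlen = (0 << 6) | (len(options) // 4)  # Ver=0, Opt Len in 4-byte words
    flags = 0                                     # O (OAM) and C (critical) clear
    return struct.pack("!BBHI", ver_optlen, flags, PROTO_ETHERNET, vni << 8) + options


def parse_geneve_header(data: bytes) -> dict:
    """Decode the fixed 8-byte header and locate the inner frame."""
    ver_optlen, flags, proto, vni_rsvd = struct.unpack("!BBHI", data[:8])
    opt_bytes = (ver_optlen & 0x3F) * 4
    return {
        "version": ver_optlen >> 6,
        "oam": bool(flags & 0x80),
        "critical": bool(flags & 0x40),
        "protocol": proto,
        "vni": vni_rsvd >> 8,            # low 8 bits are reserved
        "inner_offset": 8 + opt_bytes,  # where the inner Ethernet frame starts
    }


hdr = build_geneve_header(5001)
print(len(hdr), parse_geneve_header(hdr))
```

In a Wireshark capture at the physical NIC, this header sits right after the outer UDP header addressed to port 6081, and the inner ICMP frame begins at `inner_offset`.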

The vmnic0 (physical NIC) on the source host (ESX-1) is the specific "egress" point where this transformation is complete. A packet capture taken at this physical interface will show the "Outer IP" address of the source TEP and destination TEP, with the original ICMP packet hidden inside the GENEVE payload. If the administrator were to click on the VM's vnic, they would only see standard ICMP. By selecting the vmnic0, the administrator captures the traffic as it is placed onto the physical wire, which is the verified location to troubleshoot MTU issues, encapsulation errors, or physical fabric connectivity in a VCF environment.
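Because vmnic0 is also where MTU problems surface, it helps to quantify the encapsulation overhead. This worked calculation assumes no 802.1Q tag on the inner frame and counts only what the outer IP packet must carry (the outer Ethernet header is not part of the IP MTU): inner Ethernet header 14 bytes, GENEVE base header 8, UDP 8, and outer IPv4 20 (or IPv6 40), plus any GENEVE options. The option length is a parameter because NSX option usage is not modeled here; in practice the underlay should be configured with headroom above this floor.

```python
INNER_ETHERNET = 14  # inner MAC header (no 802.1Q tag)
GENEVE_BASE = 8      # fixed GENEVE header
OUTER_UDP = 8
OUTER_IPV4 = 20
OUTER_IPV6 = 40


def required_underlay_mtu(inner_mtu: int = 1500, geneve_options: int = 0,
                          ipv6_underlay: bool = False) -> int:
    """Minimum underlay IP MTU to carry a full-size inner packet unfragmented."""
    outer_ip = OUTER_IPV6 if ipv6_underlay else OUTER_IPV4
    return inner_mtu + INNER_ETHERNET + GENEVE_BASE + geneve_options + OUTER_UDP + outer_ip


print(required_underlay_mtu())                   # 1550
print(required_underlay_mtu(geneve_options=16))  # 1566
```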

The administrator must configure Border Gateway Protocol (BGP) on the Tier-0 Gateway to establish neighbor relationships with upstream routers.

Which two statements describe the Border Gateway Protocol (BGP) configuration on a Tier-0 Gateway? (Choose two.)

- A . EIGRP is configured by default.

- B . Can be used as an Exterior Gateway Protocol.

- C . The network is divided into areas that are logical groups.

- D . It supports a 4-byte autonomous system number.

B, D

Explanation:

In the architecture of VMware Cloud Foundation (VCF) and its networking component, NSX, the Tier-0 Gateway serves as the critical demarcation point between the virtualized overlay network and the physical infrastructure. To facilitate this communication, BGP is the industry-standard protocol utilized.

BGP is fundamentally designed as an Exterior Gateway Protocol (EGP). While it can be used internally (iBGP), its primary role in a VCF deployment is to exchange routing information between the SDDC and the physical Top-of-Rack (ToR) switches or core routers (eBGP). This allows the physical network to learn about the virtual subnets (overlay segments) and allows the virtual environment to receive a default route or specific external prefixes.

Furthermore, as global IP networking has evolved, the traditional 2-byte Autonomous System (AS) numbers (ranging from 1 to 65,535) were found to be insufficient for the number of organizations requiring them. Modern NSX versions integrated into VCF 5.x and 9.0 fully support 4-byte Autonomous System numbers (ranging from 1 to 4,294,967,295). This support is essential for service providers and large enterprises that have been assigned 4-byte ASNs by regional internet registries.
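A 4-byte ASN is commonly written in one of two notations defined in RFC 5396: asplain (a single decimal number) or asdot (high 16 bits, a dot, low 16 bits). The sketch below converts between them. It makes no assumption about which notation a particular NSX field accepts, and the ASN 4200000000 in the example is an arbitrary value from the private 4-byte range.

```python
MAX_ASN = (1 << 32) - 1  # 4,294,967,295


def to_asdot(asn: int) -> str:
    """asplain -> asdot (RFC 5396): values below 65536 stay plain."""
    if not 0 <= asn <= MAX_ASN:
        raise ValueError("ASN out of 4-byte range")
    if asn < 1 << 16:
        return str(asn)
    return f"{asn >> 16}.{asn & 0xFFFF}"


def from_asdot(text: str) -> int:
    """asdot or asplain text -> integer ASN."""
    if "." in text:
        high, low = (int(part) for part in text.split(".", 1))
        if not (0 <= high <= 0xFFFF and 0 <= low <= 0xFFFF):
            raise ValueError("each asdot half must fit in 16 bits")
        return (high << 16) | low
    asn = int(text)
    if not 0 <= asn <= MAX_ASN:
        raise ValueError("ASN out of 4-byte range")
    return asn


print(to_asdot(65001))            # 65001 (fits in 2 bytes)
print(to_asdot(4200000000))       # 64086.59904
print(from_asdot("64086.59904"))  # 4200000000
```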

Option A is incorrect because EIGRP is a proprietary Cisco protocol and is not used by NSX.

Option C describes OSPF (Open Shortest Path First), which uses "Areas," whereas BGP uses "Autonomous Systems." Therefore, the ability to act as an EGP and support for 4-byte ASNs are the verified characteristics of BGP within the VCF networking stack.

An administrator is investigating reports that several Virtual Machines (VMs) deployed on an NSX virtual network segment are dropping packets. To troubleshoot the issue, the administrator has attached two test VMs to the virtual network in order to inspect the packets sent between them.

What tool will allow the administrator to analyze the packet flow?

- A . Flows Monitoring in the VCF Operations UI.

- B . Traceflow in the NSX Manager UI.

- C . Port Mirroring in the NSX Manager UI.

- D . Live Traffic Analysis in the NSX Manager UI.

B

Explanation:

In a VMware Cloud Foundation (VCF) environment, pinpointing the exact location of packet drops within the software-defined data center requires tools that can see into the logical forwarding pipeline. While traditional networking tools like pings only provide a "binary" up/down status, Traceflow is the definitive diagnostic tool within the NSX Manager UI for deep packet path analysis.

Traceflow works by injecting a synthetic "trace packet" into the data plane, originating from a source vNIC of a specific VM. This packet is uniquely tagged so that every NSX component it touches, including the Distributed Switch (VDS), Distributed Firewall (DFW) rules, Distributed Routers (DR), and Service Routers (SR) on Edge nodes, reports back an observation.

When an administrator observes packet drops, Traceflow provides a step-by-step visualization of the packet's journey. If an NSX component drops the packet, Traceflow explicitly identifies the component responsible. For example, it might show that the packet was "Dropped by Firewall Rule #102" or "Dropped by SpoofGuard." If the observations show the packet leaving the source TEP after Geneve encapsulation but never arriving at the destination TEP, the loss most likely occurred in the physical underlay rather than inside NSX.
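Reading Traceflow output comes down to walking the observations in hop order and stopping at the first drop. The sketch below assumes a simplified, hypothetical observation record (`seq`, `component`, `kind`, `reason`); the real NSX Traceflow UI and API use their own observation schema, so treat these field names as placeholders.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Observation:
    seq: int          # hop order (hypothetical field)
    component: str    # e.g. "VM-1 vNIC", "DFW (destination host)"
    kind: str         # "received", "forwarded", "dropped", or "delivered"
    reason: str = ""  # drop reason, if any


def summarize(observations: List[Observation]) -> str:
    """Report the first drop, successful delivery, or where the trail ends."""
    if not observations:
        return "no observations"
    ordered = sorted(observations, key=lambda o: o.seq)
    for obs in ordered:
        if obs.kind == "dropped":
            return f"dropped at {obs.component}: {obs.reason}"
    if ordered[-1].kind == "delivered":
        return "delivered"
    # No drop and no delivery: the trail stops, e.g. after the source TEP.
    return f"no drop reported; last observed at {ordered[-1].component}"


path = [
    Observation(1, "VM-1 vNIC", "received"),
    Observation(2, "DFW (source host)", "forwarded"),
    Observation(3, "DFW (destination host)", "dropped", "firewall rule 102"),
]
print(summarize(path))  # dropped at DFW (destination host): firewall rule 102
```

The final branch captures the underlay case: when the last observation is the source TEP forwarding the packet, NSX saw no drop, which points the investigation at the physical fabric.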

Option A (Flows Monitoring) is useful for long-term traffic patterns and session statistics but lacks the packet-level "hop-by-hop" granular detail provided by Traceflow.

Option C (Port Mirroring) is used to send a copy of traffic to a physical or virtual appliance (like a Sniffer or IDS), which is more complex to set up and usually reserved for external deep packet inspection (DPI) rather than internal path troubleshooting.

Option D (Live Traffic Analysis) is a broader term, but within the context of the NSX troubleshooting toolkit for "packet flow analysis" between two points, Traceflow is the verified and documented solution for verifying the logical path and identifying drops.

An NSX Manager cluster has failed. The administrator deployed a new NSX Manager using the latest version and attempted to restore from a backup, but the restore operation failed.

What would an administrator do to recover the cluster?

- A . Edit the backup passphrase to match the new build.

- B . Use SDDC Manager to replace NSX Manager.

- C . Use the NSX restore API instead of the UI.

- D . Deploy an NSX Manager that matches the backup’s build.

D

Explanation:

A critical requirement for the backup and restore process in VMware NSX (and by extension, VCF) is version parity. The NSX Manager backup contains the database schema, configuration files, and state information specific to the version of the software that was running at the time the backup was taken.

When performing a restore into a "clean" environment, the NSX documentation explicitly states that the target NSX Manager appliance must be of the exact same build version as the appliance that generated the backup. If an administrator attempts to restore a backup from version 4.1.x onto a newly deployed manager running version 4.2.x or 9.0 (as implied by "latest version"), the restore process will fail because the database schema of the newer version is incompatible with the older data structure.
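The version-parity rule can be expressed as an exact build comparison performed before a restore is attempted. In this sketch the build strings are hypothetical placeholders in a dotted numeric format; in practice the backup's build comes from the backup listing and the appliance's build from the newly deployed NSX Manager.

```python
from typing import List


def _parts(build: str) -> List[int]:
    """Split a dotted numeric build string into integers."""
    return [int(p) for p in build.strip().split(".")]


def restore_precheck(backup_build: str, appliance_build: str) -> str:
    """Allow a restore only when the appliance build exactly matches the backup."""
    backup, appliance = _parts(backup_build), _parts(appliance_build)
    if appliance == backup:
        return "ok: builds match, restore can proceed"
    direction = "newer" if appliance > backup else "older"
    return (f"blocked: appliance {appliance_build} is {direction} than "
            f"backup {backup_build}; deploy build {backup_build}")


# Hypothetical build strings.
print(restore_precheck("4.1.0.2.0.11111111", "4.2.1.0.0.22222222"))
```

Note that a newer appliance is rejected just like an older one: the check is equality, not "at least".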

In a VCF environment, while SDDC Manager (Option B) handles the lifecycle and replacement of failed nodes, the actual "Restore from Backup" workflow is an NSX-native operation. If the entire cluster is lost, the recovery procedure involves:

1. Identifying the build number from the backup metadata.
2. Deploying a single "Discovery" node of that exact build.
3. Pointing that node to the backup repository (SFTP/FTP).
4. Executing the restore.

Once the primary node is restored to the correct version, the administrator can then add additional nodes to reform the cluster. Attempting to use the API (Option C) or changing the passphrase (Option A) will not bypass the fundamental requirement for version alignment between the backup file and the installed binary.

An administrator has a VMware Cloud Foundation (VCF) instance. A critical NSX security update has been released by Broadcom.

How can the administrator install the NSX update?

- A . Download the NSX patch to the NSX Manager. Apply it using VCF Operations Fleet Management.

- B . Download the NSX patch to VCF Operations. Apply it using NSX Manager.

- C . Download the NSX patch to VCF Operations. Apply it using VCF Operations Fleet Management.

- D . Download the NSX patch to the NSX Manager. Apply it using NSX Manager.

C

Explanation:

In the unified architecture of VMware Cloud Foundation (VCF) 9.0, the management paradigm has shifted towards a more centralized "Fleet Management" approach. Historically, in VCF 4.x and 5.x, updates were primarily managed via the SDDC Manager using the Lifecycle Management (LCM) engine. However, with the integration advancements in version 9.0, VCF Operations (formerly part of the Aria/vRealize suite) has taken on a more direct role in the orchestration of updates across the entire VCF "Fleet."

To comply with the VCF operational model, administrators no longer apply patches directly within the component managers (like NSX Manager or vCenter) if they wish to remain within the supported, automated framework. Instead, the workflow begins by downloading the bundle or patch to VCF Operations. This ensures that the update is validated against the current Bill of Materials (BOM) and that all dependencies, such as compatibility with the underlying ESXi versions or the management vCenter, are checked before any changes are committed.
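The BOM validation step can be pictured as a lookup against compatibility data before anything is changed. The sketch below is illustrative only: `COMPAT` and its version strings are hypothetical placeholders, not real VCF BOM entries, and the checks VCF Operations actually performs are broader than a single ESX version match.

```python
from typing import Dict, List, Set

# Hypothetical compatibility data: NSX patch version -> validated ESX versions.
COMPAT: Dict[str, Set[str]] = {
    "9.0.0.1": {"9.0.0.0", "9.0.0.1"},
}


def precheck_patch(target_nsx: str, esx_versions: List[str],
                   compat: Dict[str, Set[str]] = COMPAT) -> List[str]:
    """Return blocking findings; an empty list means the precheck passed."""
    allowed = compat.get(target_nsx)
    if allowed is None:
        return [f"NSX {target_nsx} is not in the BOM"]
    return [f"ESX {v} is not validated with NSX {target_nsx}"
            for v in sorted(set(esx_versions)) if v not in allowed]


print(precheck_patch("9.0.0.1", ["9.0.0.0", "9.0.0.0"]))  # []
print(precheck_patch("9.0.0.1", ["8.0.3"]))
```

Returning a list of findings rather than a boolean mirrors the idea that every failed dependency is reported before any change is committed.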

Once the patch is available in VCF Operations, the administrator utilizes Fleet Management to apply it. This service orchestrates the update across all NSX Managers and Transport Nodes (Edges and Hosts) in a controlled, non-disruptive manner. If the administrator were to apply the patch directly in NSX Manager (Option D), the SDDC Manager and VCF Operations databases would go out of sync, leading to a "configuration drift" where the system no longer knows which version is actually running, potentially breaking future automated lifecycle tasks. Therefore, the centralized download and application through VCF Operations Fleet Management is the verified procedure for maintaining a healthy and compliant VCF 9.0 environment.