Practice Free 3V0-24.25 Exam Online Questions

An administrator is updating a VMware vSphere Kubernetes Service (VKS) cluster by editing the cluster manifest. When saving, there is no indication that the edit was successful.

Based on the scenario, what action should the administrator take to edit and apply changes to the manifest?

- A . Define the KUBE_EDITOR or EDITOR environment variable.

- B . Verify the account editing the cluster manifest has appropriate permissions.

- C . Ensure the file permissions are set to read-write.

- D . Restart the VKS services and edit the file again.

A

Explanation:

The documented behavior of kubectl edit is that it opens the object manifest in a local text editor, and the editor it launches is controlled by environment variables. In the VCF 9.0 documentation (Workload Management / Supervisor operations), the procedure explicitly states: “The kubectl edit command opens the namespace manifest in the text editor defined by your KUBE_EDITOR or the EDITOR environment variable.”

If the administrator’s environment does not define KUBE_EDITOR or EDITOR (or defines an editor that cannot run interactively in the current session, or one that exits before the file is saved), kubectl edit may not open the expected editing workflow, and the administrator may see no confirmation after saving and exiting. Setting KUBE_EDITOR (preferred, because it affects only kubectl) or EDITOR ensures that kubectl launches a known-good editor, so the manifest can be modified, saved, and submitted back to the API server when the editor exits. The same documented requirement applies when editing other Kubernetes objects, such as a VKS cluster manifest, because the kubectl edit mechanism is identical.
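The editor lookup order described above can be modeled in a few lines. This is a simplified sketch of the precedence stated in kubectl's help text (KUBE_EDITOR, then EDITOR, then a platform default of vi or notepad), not kubectl's actual implementation; the function name `resolve_kubectl_editor` is hypothetical.

```python
import os
import sys
from typing import Mapping, Optional


def resolve_kubectl_editor(env: Optional[Mapping[str, str]] = None,
                           platform: Optional[str] = None) -> str:
    """Return the editor command `kubectl edit` is expected to launch.

    Hypothetical model of the documented precedence:
    KUBE_EDITOR first, then EDITOR, then a platform default.
    """
    env = os.environ if env is None else env
    platform = sys.platform if platform is None else platform
    for var in ("KUBE_EDITOR", "EDITOR"):
        value = env.get(var, "")
        if value:  # in this model an empty value counts as unset
            return value
    # Fallback defaults: notepad on Windows, vi everywhere else.
    return "notepad" if platform.startswith("win") else "vi"


if __name__ == "__main__":
    print(resolve_kubectl_editor())
```

In practice the fix is a shell setting such as `export KUBE_EDITOR=nano` before running `kubectl edit`. A GUI editor must block until the file is closed (for example `code --wait`); if the editor process returns immediately, kubectl reads back an unchanged file and applies nothing.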

How should an administrator enable autoscaling for a vSphere Kubernetes Service (VKS) cluster?

- A . Update the NodePool YAML to enable the autoscaling feature.

- B . Create a VKS cluster with autoscaler annotations.

- C . Create a NodePool with autoscaling enabled.

- D . Install the Cluster Autoscaler (standard package) for the cluster environment.

D

Explanation:

In VCF 9.0, cluster autoscaling is delivered as an optional capability that requires installing the Cluster Autoscaler as a standard package. The VCF 9.0 materials explicitly call out Cluster Autoscaler as an optionally installed package for vSphere Kubernetes Service, alongside other optional packages (for example, Harbor, Velero, and Istio). The release information further emphasizes that autoscaling features (including newer behaviors such as scaling from and to zero for supported VKr versions) require that “the autoscaler standard package” be installed.

Operationally, installing the autoscaler package provides the controller that watches pending pods and node utilization signals and then drives the required changes in desired worker capacity. After that controller is present, you typically express scaling intent through the cluster’s declarative configuration (for example, worker pool/node pool constraints and limits) so the autoscaler can act within the boundaries you define. Without the autoscaler package, changing replica counts or expecting automatic node growth/shrink will not produce autoscaling behavior because the control loop that performs those actions is missing.
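Once the package is installed, scaling bounds are typically declared per node pool. The sketch below builds one `spec.topology.workers.machineDeployments` entry as a Python dict. It assumes VKS honors the upstream Cluster API autoscaler min/max-size annotations; confirm the exact keys against the VKS documentation for your release. The pool name `np-1` and class `node-pool` are hypothetical.

```python
from typing import Any, Dict

# Upstream Cluster API autoscaler annotations (assumed to apply to VKS node pools).
MIN_SIZE = "cluster.x-k8s.io/cluster-api-autoscaler-node-group-min-size"
MAX_SIZE = "cluster.x-k8s.io/cluster-api-autoscaler-node-group-max-size"


def autoscaled_node_pool(name: str, pool_class: str,
                         min_size: int, max_size: int) -> Dict[str, Any]:
    """Build one entry for spec.topology.workers.machineDeployments.

    The annotations only bound the Cluster Autoscaler; nothing scales
    unless the autoscaler standard package is installed.
    """
    if not 0 <= min_size <= max_size:
        raise ValueError("expected 0 <= min_size <= max_size")
    return {
        "class": pool_class,
        "name": name,
        # No "replicas" key: the autoscaler, not the manifest, owns the count.
        "metadata": {
            "annotations": {
                MIN_SIZE: str(min_size),
                MAX_SIZE: str(max_size),
            }
        },
    }


if __name__ == "__main__":
    import json
    print(json.dumps(autoscaled_node_pool("np-1", "node-pool", 1, 5), indent=2))
```

Leaving `replicas` out is a design choice: if the manifest also pins a replica count, every re-apply can fight the autoscaler. A minimum of 0 relies on the scale-from-zero support mentioned above for supported VKr versions.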

An administrator is modernizing the internal HR and payroll applications using vSphere Kubernetes Service (VKS). The applications are composed of multiple microservices deployed across Kubernetes clusters, fronted by Ingress controllers that route user traffic through Avi Kubernetes Operator. During testing, it is discovered that manually creating and renewing TLS certificates for each Ingress resource is error-prone and leads to periodic outages when certificates expire. The requirements also mandate that all application endpoints use trusted certificates issued through the corporate certificate authority (CA) with automatic renewal and rotation.

Which requirement can be met by using cert-manager?

- A . Routing requests based on HTTP headers.

- B . Generating certificates by connecting only to external services.

- C . Adding certificates and certificate issuers as resource types in Kubernetes clusters.

- D . Scanning container images stored in Harbor.

C

Explanation:

cert-manager addresses the operational risk described (manual creation and renewal causing outages) by making certificate lifecycle management a native, declarative Kubernetes workflow. Instead of treating TLS certificates as manually managed files, cert-manager extends the Kubernetes API with custom resources such as Certificate, Issuer, and ClusterIssuer, so certificates and their issuing policies become first-class objects that can be version-controlled and automatically reconciled.

This directly satisfies the requirement to use trusted certificates issued through the corporate CA, because an Issuer or ClusterIssuer can represent the corporate CA integration and define how certificate requests are fulfilled. Once configured, cert-manager continuously monitors certificate validity, automatically renews and rotates certificates before they expire, and updates the referenced Kubernetes Secrets so Ingress endpoints remain protected without human intervention. In a vSphere Supervisor / VKS environment, VMware also uses cert-manager on the Supervisor for automated certificate rotation in platform integrations (for example, rotating certificates used by monitoring components), reinforcing the model of automated rotation rather than manual certificate handling.
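To make the "certificates as resource types" point concrete, the sketch below builds a cert-manager `Certificate` object that references a `ClusterIssuer`, and estimates when renewal would fire. The names `payroll`, `hr`, and `corporate-ca` are hypothetical. The renewal rule is an assumption: recent cert-manager releases document a default `renewBefore` of one third of the certificate lifetime, while older releases used a fixed window, so check the version you run.

```python
from datetime import datetime, timedelta, timezone
from typing import Any, Dict, List, Optional


def certificate_manifest(name: str, namespace: str, dns_names: List[str],
                         issuer: str) -> Dict[str, Any]:
    """A cert-manager Certificate asking a ClusterIssuer (for example one
    backed by the corporate CA) to keep a TLS Secret populated."""
    return {
        "apiVersion": "cert-manager.io/v1",
        "kind": "Certificate",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            "secretName": f"{name}-tls",  # Secret the Ingress references
            "dnsNames": list(dns_names),
            "issuerRef": {"name": issuer, "kind": "ClusterIssuer"},
        },
    }


def renewal_time(not_before: datetime, not_after: datetime,
                 renew_before: Optional[timedelta] = None) -> datetime:
    """Estimate when renewal is triggered.

    Assumes that, when renewBefore is unset, renewal happens once two
    thirds of the validity period have elapsed.
    """
    lifetime = not_after - not_before
    if renew_before is None:
        renew_before = lifetime / 3
    if renew_before >= lifetime:
        raise ValueError("renew_before must be shorter than the lifetime")
    return not_after - renew_before


if __name__ == "__main__":
    issued = datetime(2025, 1, 1, tzinfo=timezone.utc)
    print(renewal_time(issued, issued + timedelta(days=90)))
```

For a 90-day certificate with no explicit `renewBefore`, this model renews on day 60, leaving a 30-day buffer before the outage scenario described in the question could occur.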

Which feature in VMware vSphere Kubernetes Service (VKS) provides vSphere storage policy integration that supports provisioning persistent volumes and their backing virtual disks?

- A . Cloud storage provider

- B . vSphere Cloud Native Storage (CNS)

- C . Container Storage Interface (CSI)

- D . Cloud storage implementation

B

Explanation:

VCF 9.0 describes Cloud Native Storage (CNS) on vCenter as the component that implements “provisioning and lifecycle operations for persistent volumes.” When provisioning persistent volumes, CNS “interacts with the vSphere First Class Disk functionality to create virtual disks that back the volumes,” and it “communicates with Storage Policy Based Management to guarantee a required level of service to the disks.” This is exactly the relationship between storage policies and backing virtual disks that the question is testing: storage policies (through Storage Policy Based Management) define the requirements, and CNS is the vCenter-side service that applies those requirements while creating and managing the backing storage objects.

In contrast, CSI (including Supervisor CNS-CSI and VKS pvCSI) is the Kubernetes-facing interface and driver used to request and consume storage, but it does not “provide” the vSphere storage policy system; rather, it relies on CNS/CNS-CSI and vCenter services to fulfill provisioning requests. Therefore, vSphere Cloud Native Storage (CNS) is the correct choice.
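From the Kubernetes side, the storage policy is reached through a StorageClass named in a PersistentVolumeClaim. The sketch below builds such a claim as a Python dict. It assumes the storage policies assigned to the vSphere Namespace are exposed as StorageClasses of the same name; the class name `vsan-default-storage-policy` and claim name `payroll-db` are hypothetical.

```python
from typing import Any, Dict


def pvc_manifest(name: str, storage_class: str, size: str = "10Gi") -> Dict[str, Any]:
    """A PersistentVolumeClaim whose StorageClass maps to a vSphere storage policy.

    Request path, as described above: PVC -> CSI driver -> CNS in vCenter
    -> First Class Disk placed according to the SPBM policy.
    """
    return {
        "apiVersion": "v1",
        "kind": "PersistentVolumeClaim",
        "metadata": {"name": name},
        "spec": {
            "accessModes": ["ReadWriteOnce"],
            "storageClassName": storage_class,
            "resources": {"requests": {"storage": size}},
        },
    }


if __name__ == "__main__":
    print(pvc_manifest("payroll-db", "vsan-default-storage-policy"))
```

The claim never mentions CNS directly; CSI carries the request, and CNS is what turns it into a policy-compliant virtual disk.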

An administrator must create a multi-zone vSphere Supervisor deployment in a VMware Cloud Foundation (VCF) environment.

What is the primary purpose of this configuration?

- A . To create isolated security domains using NSX micro-segmentation.

- B . To enable cross-site vSAN stretched clusters for data replication between data centers.

- C . To provide high availability for the Supervisor Cluster and vSphere Kubernetes clusters.

- D . To simplify the management of network pools and IP address ranges.

C

Explanation:

A multi-zone Supervisor in VCF 9.0 is designed to deliver platform resiliency and high availability at the vSphere cluster (zone) failure-domain level. The VCF 9.0 documentation states that a multi-zone Supervisor “leverages three vSphere clusters” (each mapped to a vSphere Zone) and that these zones are used by both “workloads and Supervisor management components to deliver high availability,” exposing “each cluster as an independent, consumable availability zone,” resulting in a “resilient, HA-capable platform.”

This is reinforced in the vSphere Zones guidance: deploying the Supervisor on three vSphere Zones spreads the control plane VMs across three zones, providing “cluster-level high availability” that protects the Supervisor control plane against a single cluster-level failure (one control plane VM per management zone).

Because VKS (vSphere Kubernetes Service) runs on Supervisor, distributing Supervisor control plane and workload placement across zones improves overall availability of Supervisor services and Kubernetes consumption in that Supervisor instance.
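Why three zones with one control plane VM each? A short check makes the arithmetic explicit. The sketch assumes the control plane needs a strict majority of its VMs to keep running (as an etcd-style quorum does); the VM and zone names are hypothetical.

```python
from typing import Dict


def survives_single_zone_failure(placement: Dict[str, str]) -> bool:
    """placement maps control plane VM name -> zone.

    Returns True if losing any one entire zone still leaves a majority
    (quorum) of control plane VMs running.
    """
    if not placement:
        return False
    total = len(placement)
    quorum = total // 2 + 1
    for failed_zone in set(placement.values()):
        survivors = sum(1 for zone in placement.values() if zone != failed_zone)
        if survivors < quorum:
            return False
    return True


if __name__ == "__main__":
    spread = {"cp-1": "zone-a", "cp-2": "zone-b", "cp-3": "zone-c"}
    print(survives_single_zone_failure(spread))
```

With one VM per zone, any single zone loss leaves 2 of 3 VMs, which meets the quorum of 2. Placing all three VMs in one cluster, or two in one zone and one in another, fails this check because one zone outage removes the majority.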

What is the purpose of the VMware vSphere Kubernetes Service (VKS) Service Mesh?

- A . Provides service discovery across multiple clusters.

- B . Provides an infrastructure layer that makes communication between applications possible, structured, and observable.

- C . Provides dynamic application load balancing and autoscaling across multiple clusters and multiple sites.

- D . Provides a centralized, global routing table to simplify and optimize traffic management.

B

Explanation:

A service mesh is an application communication layer that standardizes service-to-service traffic inside Kubernetes. Instead of each development team building custom logic for retries, timeouts, encryption, and telemetry, the mesh provides these capabilities consistently across workloads. This is typically done by inserting a data plane (often sidecar proxies or node-level proxies) that intercepts inbound and outbound traffic for each microservice, plus a control plane that distributes configuration and identity material.

The key outcomes align directly with option B: communication becomes possible (reliable connectivity patterns), structured (consistent routing rules, policies, and identity), and observable (metrics, logs, and distributed tracing for east-west traffic). A service mesh commonly adds controls such as mTLS encryption, fine-grained traffic policy (allow/deny, rate limits, circuit breaking), and progressive delivery patterns (canary/blue-green) without changing application code.

By contrast, service discovery (A) is usually a built-in Kubernetes function, load balancing/autoscaling across sites (C) is not the primary definition of a service mesh, and a single centralized global routing table (D) is not how meshes are typically described or implemented.
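As an illustration of "structured" communication, the sketch below builds a routing policy that the mesh proxies, not the application, would enforce: a canary traffic split plus retries and a timeout. It assumes an Istio-based mesh (Istio appears among the optional VKS packages); other meshes express the same intent with different resources. The service names are hypothetical.

```python
from typing import Any, Dict


def canary_virtual_service(host: str, stable: str, canary: str,
                           canary_weight: int) -> Dict[str, Any]:
    """Istio VirtualService splitting traffic between two Services,
    with retries and a timeout enforced by the mesh data plane."""
    if not 0 <= canary_weight <= 100:
        raise ValueError("canary_weight must be between 0 and 100")
    return {
        "apiVersion": "networking.istio.io/v1beta1",
        "kind": "VirtualService",
        "metadata": {"name": f"{host}-routes"},
        "spec": {
            "hosts": [host],
            "http": [{
                "route": [
                    {"destination": {"host": stable}, "weight": 100 - canary_weight},
                    {"destination": {"host": canary}, "weight": canary_weight},
                ],
                "retries": {"attempts": 3, "perTryTimeout": "2s"},
                "timeout": "10s",
            }],
        },
    }


if __name__ == "__main__":
    print(canary_virtual_service("payroll", "payroll-v1", "payroll-v2", 10))
```

The application code for `payroll-v1` and `payroll-v2` is unchanged; shifting the canary weight or tightening retries is a configuration change applied to the mesh.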

What Kubernetes object is used to grant permissions to a cluster-wide resource?

- A . RoleReference

- B . RoleBinding

- C . ClusterRoleBinding

- D . ClusterRoleAccess

C

Explanation:

In Kubernetes RBAC, cluster-wide permissions are defined with a ClusterRole and granted to a user, group, or service account by creating a ClusterRoleBinding. The VCF 9.0 documentation for VKS cluster access describes the RBAC workflow used to grant access: first you “define a Role or ClusterRole for the user or group,” and then you “create a RoleBinding or ClusterRoleBinding for the user or group and apply it to the cluster.” This wording reflects the RBAC distinction: a RoleBinding is scoped to a namespace, whereas a ClusterRoleBinding is used when the permissions must apply at the cluster scope (cluster-wide resources and/or across namespaces).

VCF 9.0 further illustrates the purpose of ClusterRoleBinding in a token-auth example: it lists the required objects, including “ClusterRole: This defines the access to the Kubernetes cluster” and “ClusterRoleBinding: This binds the created Service Account with the defined ClusterRole.” That binding step is what grants the subject the cluster-level privileges defined in the ClusterRole, making ClusterRoleBinding the correct object for permissions to cluster-wide resources.
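The ClusterRole/ClusterRoleBinding pair described above can be sketched as two manifests. Nodes are used because they are a cluster-scoped resource that a namespaced RoleBinding cannot grant access to. The role name `node-reader` and the service account `monitor` in namespace `ops` are hypothetical.

```python
from typing import Any, Dict, Tuple


def cluster_rbac_for_service_account(sa_name: str, sa_namespace: str,
                                     role_name: str = "node-reader"
                                     ) -> Tuple[Dict[str, Any], Dict[str, Any]]:
    """Return (ClusterRole, ClusterRoleBinding) granting read access to nodes."""
    cluster_role = {
        "apiVersion": "rbac.authorization.k8s.io/v1",
        "kind": "ClusterRole",
        "metadata": {"name": role_name},  # cluster-scoped: no namespace
        "rules": [{
            "apiGroups": [""],  # "" is the core API group, where nodes live
            "resources": ["nodes"],
            "verbs": ["get", "list", "watch"],
        }],
    }
    binding = {
        "apiVersion": "rbac.authorization.k8s.io/v1",
        "kind": "ClusterRoleBinding",
        "metadata": {"name": f"{role_name}-{sa_name}"},  # also no namespace
        "roleRef": {
            "apiGroup": "rbac.authorization.k8s.io",
            "kind": "ClusterRole",
            "name": role_name,
        },
        # A ServiceAccount subject must name its namespace even in a cluster binding.
        "subjects": [{"kind": "ServiceAccount", "name": sa_name,
                      "namespace": sa_namespace}],
    }
    return cluster_role, binding


if __name__ == "__main__":
    for obj in cluster_rbac_for_service_account("monitor", "ops"):
        print(obj["kind"], obj["metadata"]["name"])
```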

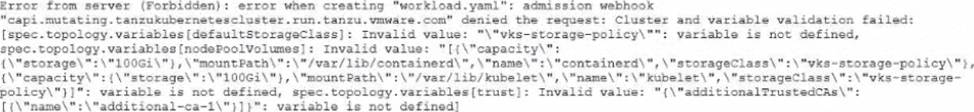

An administrator is upgrading to VKS 3.4 and encounters the following error during cluster creation using workload.yaml:

How should the administrator resolve this issue to successfully complete the upgrade?

- A . Verify workload cluster versions to ensure compatibility.

- B . Remove the deprecated variables and apply the new workload.yaml.

- C . Rename the vSphere storage policy and apply the new workload.yaml.

- D . Restart the Kubernetes services and restart the upgrade.

B

Explanation:

The error shows an admission webhook denial in which variable validation failed and multiple entries under spec.topology.variables[…] are reported as “variable is not defined”. That message indicates the manifest supplies variables that are not part of the current Cluster API / topology schema enforced by the Supervisor during cluster creation. In VKS, cluster provisioning is declarative: you invoke the VKS API with kubectl and a YAML file, and “after the cluster is created, you update the YAML to update the cluster.” When the API schema changes between releases, older manifests can contain fields or variables that are no longer recognized, and the admission webhook rejects them to prevent an invalid cluster spec from being created.

This aligns with VMware’s broader direction: the older TanzuKubernetesCluster (TKC) API was deprecated, and customers are encouraged to use Cluster API for bootstrap, configuration, and lifecycle management. In practice, to complete the upgrade and cluster creation successfully, you must update the cluster manifest to match the supported schema: remove the deprecated or unknown topology variables shown in the error (for example, the undefined storage-policy and trust variables) and re-apply the corrected workload.yaml.
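The cleanup step can be sketched as a filter over `spec.topology.variables`. This is an illustrative helper, not a VMware tool: it assumes you have already collected the set of variable names the referenced ClusterClass actually defines (for example from that ClusterClass object's variable definitions), and the variable names in the usage example are hypothetical. It also reports what it removed, because a deprecated variable may have a replacement that still needs to be set.

```python
import copy
from typing import Any, Dict, Iterable, List, Tuple


def drop_undefined_variables(cluster: Dict[str, Any],
                             defined_names: Iterable[str]
                             ) -> Tuple[Dict[str, Any], List[str]]:
    """Return (cleaned copy of the Cluster manifest, names of removed variables).

    Keeps only spec.topology.variables entries whose name appears in
    defined_names; the input manifest is not modified.
    """
    allowed = set(defined_names)
    cleaned = copy.deepcopy(cluster)
    topology = cleaned.get("spec", {}).get("topology", {})
    kept: List[Dict[str, Any]] = []
    removed: List[str] = []
    for var in topology.get("variables", []):
        if var.get("name") in allowed:
            kept.append(var)
        else:
            removed.append(var.get("name"))
    if "variables" in topology:
        topology["variables"] = kept
    return cleaned, removed


if __name__ == "__main__":
    manifest = {"spec": {"topology": {"variables": [
        {"name": "vmClass", "value": "best-effort-small"},
        {"name": "legacyStoragePolicy", "value": "gold"},
    ]}}}
    print(drop_undefined_variables(manifest, {"vmClass"})[1])
```

Review the removed names before re-applying workload.yaml rather than discarding them blindly.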

What is the function of Contour in a VMware vSphere Kubernetes Service (VKS) cluster?

- A . Providing an ingress controller to expose services to external users.

- B . Monitoring the health and performance of the underlying infrastructure.

- C . Managing the lifecycle and patching of VKS cluster nodes.

- D . Providing persistent storage for stateful applications.

A

Explanation:

In VCF 9.0, ingress is described as part of VKS cluster networking. The documentation’s VKS Cluster Networking table lists “Cluster ingress” and identifies its role as routing inbound pod traffic. It further clarifies that this function is delivered by a third-party ingress controller, and explicitly names Contour as an example (“you can use any third-party ingress controller, such as Contour”).

That mapping is exactly what option A describes: Contour is deployed to provide ingress capabilities so that inbound requests from outside the cluster can be routed to Kubernetes services and pods according to Ingress rules. In other words, Contour is not a storage component (that role belongs to CSI/CNS/pvCSI), not a node lifecycle manager (that is handled by VKS/Cluster API/VM Service), and not an infrastructure health monitoring tool (that would be metrics and observability tooling). VCF 9.0 positions Contour specifically within the ingress part of the networking feature set, making A the correct answer.
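The routing role described above is expressed through standard Ingress objects that Contour watches. The sketch builds one as a Python dict. The ingress class name `contour` is an assumption (the actual class depends on how the Contour package was installed), and the host and service names are hypothetical.

```python
from typing import Any, Dict


def contour_ingress(name: str, host: str, service: str, port: int,
                    ingress_class: str = "contour") -> Dict[str, Any]:
    """An Ingress routing all paths on `host` to one Service port.

    ingress_class selects which controller (here assumed to be Contour)
    acts on this Ingress.
    """
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "Ingress",
        "metadata": {"name": name},
        "spec": {
            "ingressClassName": ingress_class,
            "rules": [{
                "host": host,
                "http": {"paths": [{
                    "path": "/",
                    "pathType": "Prefix",
                    "backend": {"service": {"name": service,
                                            "port": {"number": port}}},
                }]},
            }],
        },
    }


if __name__ == "__main__":
    print(contour_ingress("hr-portal", "hr.example.com", "hr-frontend", 8080))
```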

An administrator is deploying vSphere Kubernetes Service (VKS) on a VMware Cloud Foundation workload domain to support a new internal AI and data analytics platform. The environment must host both virtual machine (VM) applications and containerized workloads while maintaining a unified networking and security model through NSX. The design documentation outlines the requirements for the Supervisor infrastructure components.

What three components form the foundation of a VMware vSphere Kubernetes Service (VKS) Supervisor deployment? (Choose three.)

- A . Cluster API

- B . NSX Manager virtual machine

- C . Supervisor control plane virtual machine

- D . vCenter Virtual Distributed Switch

- E . Virtual Machine Service

- F . NSX Advanced Load Balancer Controller virtual machine

B C F

Explanation:

VCF 9.0 describes Supervisor networking with NSX as a model where NSX provides network connectivity to Supervisor control plane VMs, services, and workloads, and where the Supervisor can use either the NSX Load Balancer or the Avi Load Balancer. In the NSX + Avi design, the documentation identifies the Avi Load Balancer Controller as a core infrastructure element: the Controller “interacts with vCenter to automate the load balancing for the VKS clusters,” and is responsible for provisioning and coordinating Service Engines and exposing operational interfaces.

Also, the Supervisor itself is anchored by the Supervisor control plane virtual machines. VCF 9.0 explains that you deploy the Supervisor with one or three control plane VMs, and that in a three-zone Supervisor the control plane VMs are distributed across zones for high availability.

Finally, because the requirement explicitly calls for a unified networking and security model through NSX, the NSX Manager virtual machine is foundational to the NSX-based Supervisor design, as shown in the documented architecture and component descriptions for NSX-backed Supervisor deployments.